MedQ-Bench: Evaluating and Exploring Medical Image Quality Assessment Abilities in MLLMs

The first comprehensive benchmark establishing a perception–reasoning paradigm for language-based evaluation of medical image quality with Multimodal LLMs

Medical Image Quality Assessment (IQA) serves as the first-mile safety gate for clinical AI, yet existing approaches remain constrained by scalar, score-based metrics and fail to reflect the descriptive, human-like reasoning process central to expert evaluation. To address this gap, this work introduces MedQ-Bench, a comprehensive benchmark that establishes a perception–reasoning paradigm for language-based evaluation of medical image quality with Multimodal Large Language Models (MLLMs).

MedQ-Bench defines two complementary tasks: MedQ-Perception, which probes low-level perceptual capability via human-curated questions on fundamental visual attributes, and MedQ-Reasoning, encompassing both no-reference and comparison reasoning tasks that align model evaluation with human-like reasoning on image quality. The benchmark spans 5 imaging modalities (MRI, CT, endoscopy, histopathology, fundus photography) and over 40 quality attributes, totaling 2,600 perceptual queries and 708 reasoning assessments.

A rigorous evaluation of 14 state-of-the-art MLLMs — including open-source, medical-specialized, and commercial systems — demonstrates that models exhibit preliminary but unstable perceptual and reasoning skills, with insufficient accuracy for reliable clinical use. The best AI model (GPT-5) scores 68.97% on perception, significantly underperforming human experts at 82.50%, highlighting the need for targeted optimization of MLLMs in medical IQA.

Core Highlights

01 — Perception–Reasoning Evaluation Paradigm

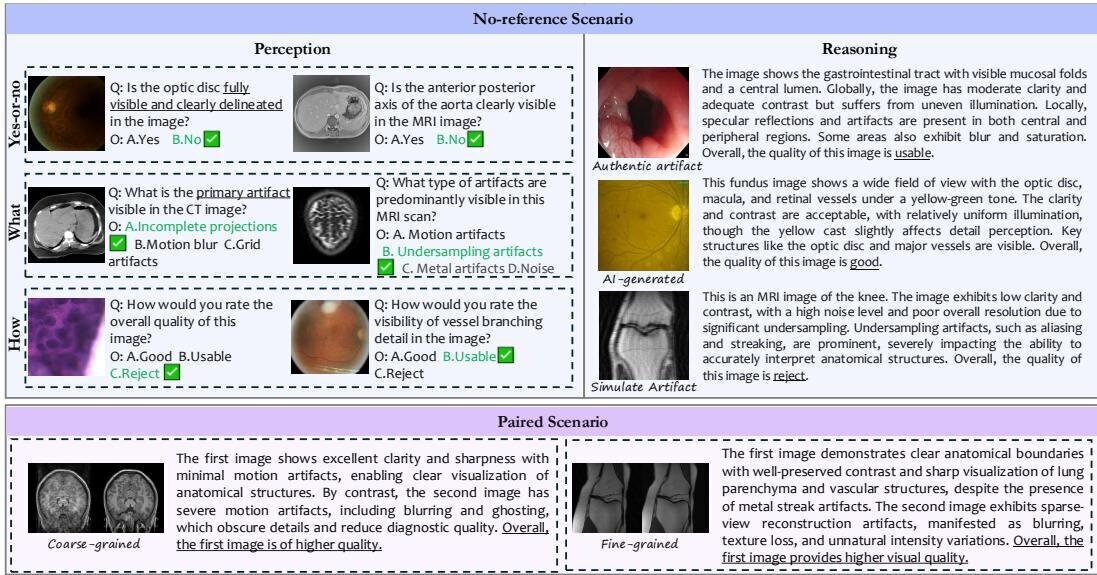

MedQ-Bench pioneers a systematic evaluation methodology that mirrors clinicians' cognitive workflow: first perceiving quality-related attributes, then reasoning about their clinical impact. MedQ-Perception evaluates direct visual perception using single-image prompts with three question types: Yes-or-No, What (degradation identification), and How (severity assessment). Questions are organized along two axes: degradation severity level, and general vs. modality-specific content. MedQ-Reasoning encompasses no-reference reasoning tasks, which require models to generate comprehensive quality analyses of a single image, and comparison reasoning tasks, which evaluate how well models discriminate quality between image pairs at both coarse-grained and fine-grained difficulty levels.
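To make the two organizing axes concrete, the following is a minimal sketch of how perception items could be represented and scored per group. The `PerceptionItem` schema, its field names, the severity labels, and the sample questions are all hypothetical illustrations, not the benchmark's actual data format.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class PerceptionItem:
    """One multiple-choice perception query (hypothetical schema)."""
    item_id: str
    modality: str        # e.g. "MRI", "CT"
    question_type: str   # "yes_no", "what", or "how"
    severity: str        # assumed labels: "none", "mild", "severe"
    scope: str           # "general" or "modality_specific"
    answer: str          # ground-truth option text


def accuracy_by(items, predictions, key):
    """Return {group: accuracy}, grouping items by the attribute named `key`.

    Items that have no prediction are counted as incorrect.
    """
    correct = defaultdict(int)
    total = defaultdict(int)
    for item in items:
        group = getattr(item, key)
        total[group] += 1
        if predictions.get(item.item_id) == item.answer:
            correct[group] += 1
    return {group: correct[group] / total[group] for group in total}


# Toy data for illustration only; these are not benchmark questions.
items = [
    PerceptionItem("q1", "MRI", "yes_no", "none", "general", "No"),
    PerceptionItem("q2", "CT", "what", "mild", "modality_specific", "Metal artifact"),
    PerceptionItem("q3", "CT", "how", "mild", "general", "Mild"),
    PerceptionItem("q4", "MRI", "how", "severe", "general", "Severe"),
]
predictions = {"q1": "No", "q2": "Noise", "q3": "Mild", "q4": "Severe"}

by_severity = accuracy_by(items, predictions, "severity")
by_type = accuracy_by(items, predictions, "question_type")
print(by_severity)
print(by_type)
```

Grouping by an attribute name keeps one scoring function usable for every breakdown (severity, question type, scope, or modality).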

02 — Multi-Dimensional Judging Protocol with Human–AI Validation

To evaluate reasoning ability, a multi-dimensional judging protocol scores model outputs along four complementary axes: Completeness (coverage of key visual information), Preciseness (consistency with reference without contradictions), Consistency (internal logical coherence between reasoning and conclusions), and Quality Accuracy (correctness of quality comparison judgments). Human–AI alignment validation with 200 cases evaluated by three board-certified medical imaging specialists demonstrates strong alignment: 83.3% accuracy for completeness, 87.0% for preciseness, and 90.5% for consistency, with quadratic weighted Cohen's kappa values of 0.774–0.985.
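The agreement statistics above can be illustrated with a short sketch that computes exact-match accuracy and quadratic weighted Cohen's kappa between a human rater and an automated judge. It assumes each axis is scored on an integer 0 to 2 scale, which is an assumption made here for illustration; the ratings below are invented toy values, not data from the validation study.

```python
def exact_agreement(rater_a, rater_b):
    """Fraction of cases where both raters assign the same score."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("rating lists must be non-empty and of equal length")
    return sum(a == b for a, b in zip(rater_a, rater_b)) / len(rater_a)


def quadratic_weighted_kappa(rater_a, rater_b, num_levels=3):
    """Cohen's kappa with quadratic weights for integer scores 0..num_levels-1."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("rating lists must be non-empty and of equal length")
    n = len(rater_a)

    # Observed confusion matrix: rows are rater_a scores, columns rater_b scores.
    observed = [[0] * num_levels for _ in range(num_levels)]
    for a, b in zip(rater_a, rater_b):
        observed[a][b] += 1

    row_totals = [sum(row) for row in observed]
    col_totals = [sum(observed[i][j] for i in range(num_levels))
                  for j in range(num_levels)]

    max_dist = (num_levels - 1) ** 2
    weighted_observed = 0.0
    weighted_expected = 0.0
    for i in range(num_levels):
        for j in range(num_levels):
            weight = (i - j) ** 2 / max_dist  # 0 on the diagonal, 1 at max distance
            weighted_observed += weight * observed[i][j]
            # Expected count under independence of the two raters' marginals.
            weighted_expected += weight * row_totals[i] * col_totals[j] / n

    if weighted_expected == 0:
        # Both raters used one identical level throughout; kappa is undefined,
        # and returning 1.0 is a convention chosen for this sketch.
        return 1.0
    return 1.0 - weighted_observed / weighted_expected


# Toy ratings for illustration only.
human = [0, 1, 2, 2, 1, 0]
judge = [0, 1, 2, 1, 1, 0]
accuracy = exact_agreement(human, judge)
kappa = quadratic_weighted_kappa(human, judge)
print(f"accuracy={accuracy:.3f}, kappa={kappa:.3f}")
```

Quadratic weights penalize a two-level disagreement four times as heavily as a one-level disagreement, which suits ordinal scores where near misses should count for more than distant ones.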

03 — Comprehensive Empirical Analysis Reveals Critical Gaps

Evaluation of 14 MLLMs reveals a clear performance hierarchy on perception: closed-source frontier models lead (GPT-5 at 68.97%), followed by open-source models (Qwen2.5-VL-72B at 63.14%), while medical-specialized models unexpectedly underperform (MedGemma-27B at 57.16%). Mild degradation is the most challenging detection scenario, with average accuracy dropping to 56% compared with 72% for images with no degradation. Even the most advanced MLLMs fall short of excellent scores on reasoning tasks, with the highest scores being only 1.195/2.0 for completeness and 1.118/2.0 for preciseness. The substantial gap of 13.53 percentage points between the best AI model (68.97%) and human experts (82.50%) underscores the need for targeted improvements.
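As a quick arithmetic check on the figures quoted above, the sketch below derives the human–model gap in percentage points, the same gap relative to human performance, and the top reasoning scores as a share of the 2.0 maximum. All inputs are numbers stated in the text; the variable names are illustrative.

```python
# Perception accuracies (%) quoted in the text.
HUMAN_EXPERT = 82.50
BEST_MODEL = 68.97  # GPT-5

# Absolute gap in percentage points, and the shortfall relative to human experts.
gap_points = round(HUMAN_EXPERT - BEST_MODEL, 2)
relative_shortfall = round(100 * gap_points / HUMAN_EXPERT, 1)

# Best reasoning scores, reported against a maximum of 2.0.
MAX_SCORE = 2.0
completeness_pct = round(100 * 1.195 / MAX_SCORE, 2)
preciseness_pct = round(100 * 1.118 / MAX_SCORE, 2)

print(f"gap: {gap_points} points ({relative_shortfall}% of human accuracy)")
print(f"completeness: {completeness_pct}% of max, preciseness: {preciseness_pct}% of max")
```

Reporting the gap in percentage points avoids confusing it with the relative shortfall, which is a different number.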

MedQ-Bench establishes a clinically grounded and interpretable standard for measuring and advancing medical image quality assessment. By moving beyond high-level diagnostic reasoning toward foundational quality perception and reasoning skills, it reveals that current MLLMs, both general-purpose and medical-specialized, possess only preliminary and unstable capabilities for this critical clinical task. The benchmark is expected to inform the development of MLLMs with stronger low-level visual understanding and trustworthy reasoning, paving the way for safe and reliable integration of automated quality control into clinical imaging workflows.

Key Contributions

- Introduced MedQ-Bench, the first comprehensive benchmark systematically evaluating medical IQA capabilities of MLLMs through a perception–reasoning paradigm spanning 5 modalities and 40+ quality attributes with 3,308 total samples.

- Designed a multi-dimensional judging protocol scoring model outputs along four complementary axes (completeness, preciseness, consistency, quality accuracy), validated through rigorous human–AI alignment achieving 83.3–90.5% accuracy.

- Constructed a clinically representative, multi-source dataset blending authentic clinical images, simulated degraded images via physics-based reconstruction, and AI-generated images for robust evaluation across realistic and controlled scenarios.

- Conducted comprehensive empirical analysis of 14 state-of-the-art MLLMs, revealing a gap of 13.53 percentage points between the best model and human experts on perception, and showing that medical-specialized models unexpectedly underperform general-purpose ones, which calls into question current domain adaptation strategies.

Authors

Jiyao Liu*, Jinjie Wei*, Wanying Qu, Chenglong Ma, Junzhi Ning, Yunheng Li, Ying Chen, Xinzhe Luo, Pengcheng Chen, Xin Gao, Ming Hu, Huihui Xu, Xin Wang, Shujian Gao, Dingkang Yang, Zhongying Deng, Jin Ye, Lihao Liu, Junjun He, Ningsheng Xu