Shanghai Artificial Intelligence Laboratory, China · Est. 2020

AI at the Frontier of Medicine & Healthcare

We build world-leading AI models, agents, and tools that advance precision medicine and everyday healthcare — expanding access to high-quality diagnosis, treatment, and discovery for every clinician, patient, and community.

30+

Publications

20+

Group Members

10,000+

Citations

Our North Star

A world where the best of medicine and healthcare is no longer bound by geography, institution, or privilege — where AI extends the hand of every great doctor to every person on Earth.

Our mission. Within this decade, ship general-purpose medical AI — foundation models, multi-agent systems, and open tooling — that measurably improves outcomes in real hospitals, real clinics, and the everyday health of real communities.

01

1,800+

datasets archived

100B+

tokens

361M

segmentation masks

Medical Data Infrastructure

Building the Foundation: Large-Scale Medical Data Platforms

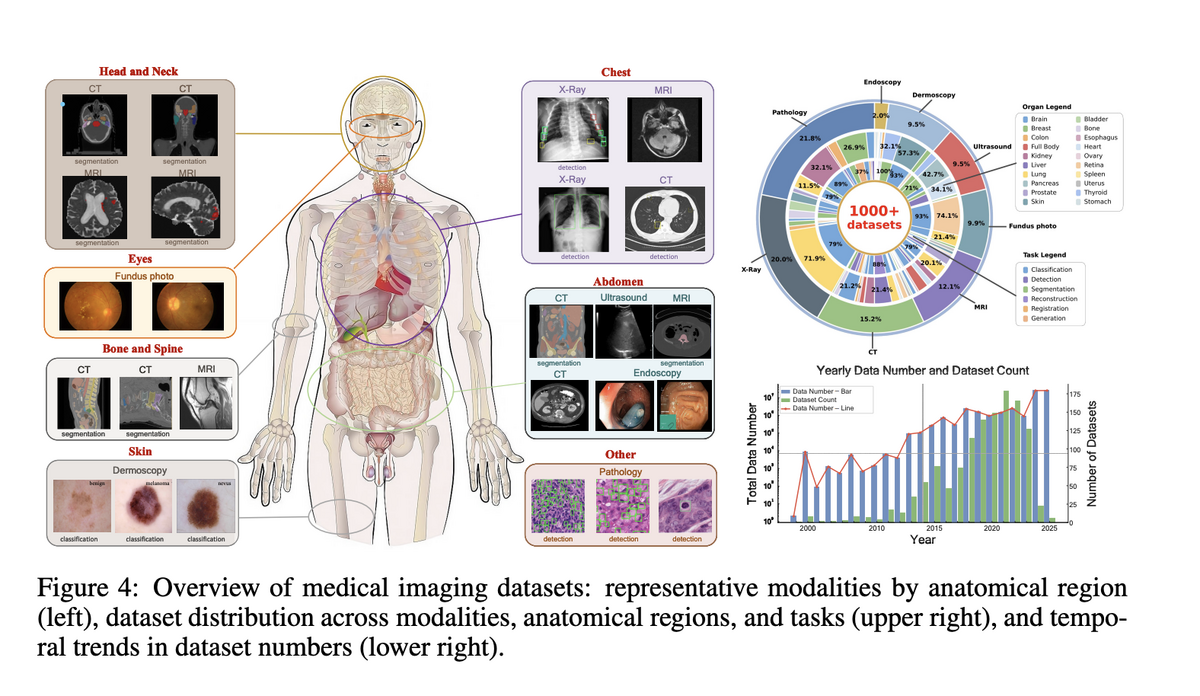

We build the data infrastructure that powers world-leading medical AI. Project Imaging-X surveys and integrates 1,000+ open medical imaging datasets via a Metadata-Driven Fusion Paradigm. Our private corpus holds more than 100B tokens of biomedical text, 100M+ medical images, and 361M segmentation masks, enabling foundation models that generalize across modalities, tasks, and diseases.

Project Imaging-X

Metadata-driven fusion

Explore Project Imaging-X →

02

5.5M

image-text pairs

18

clinical specialties

38

imaging modalities

Medical Multimodal Large Models

General Medical Vision-Language Models: GMAI-VL and Beyond

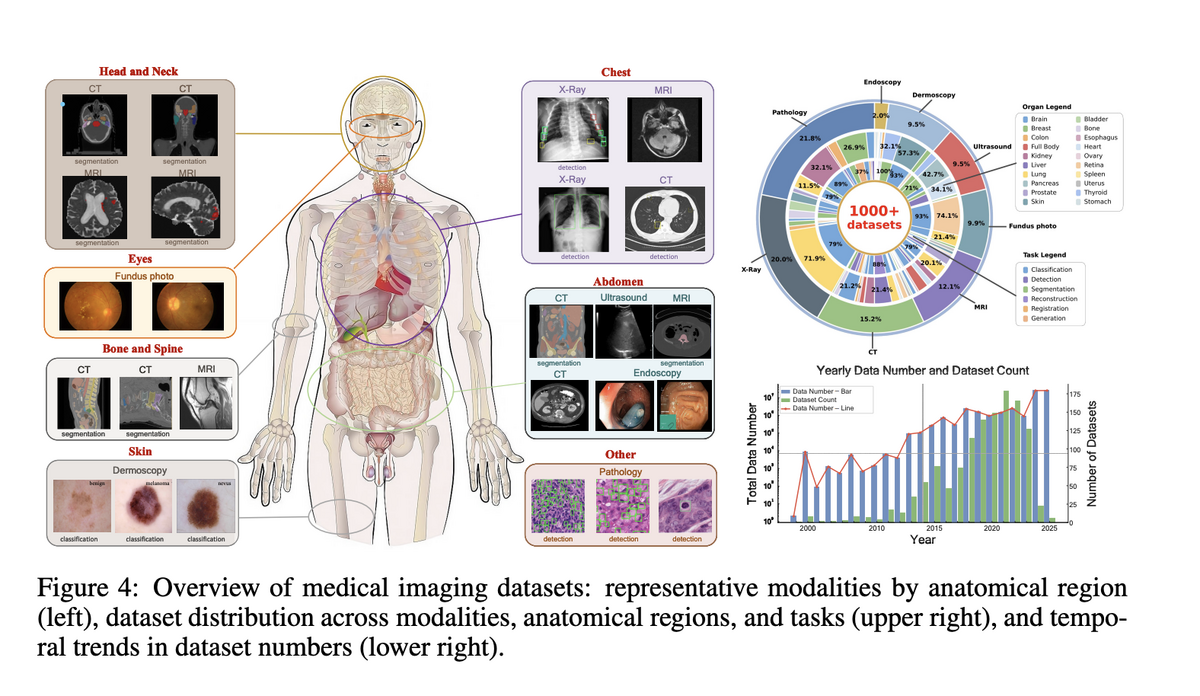

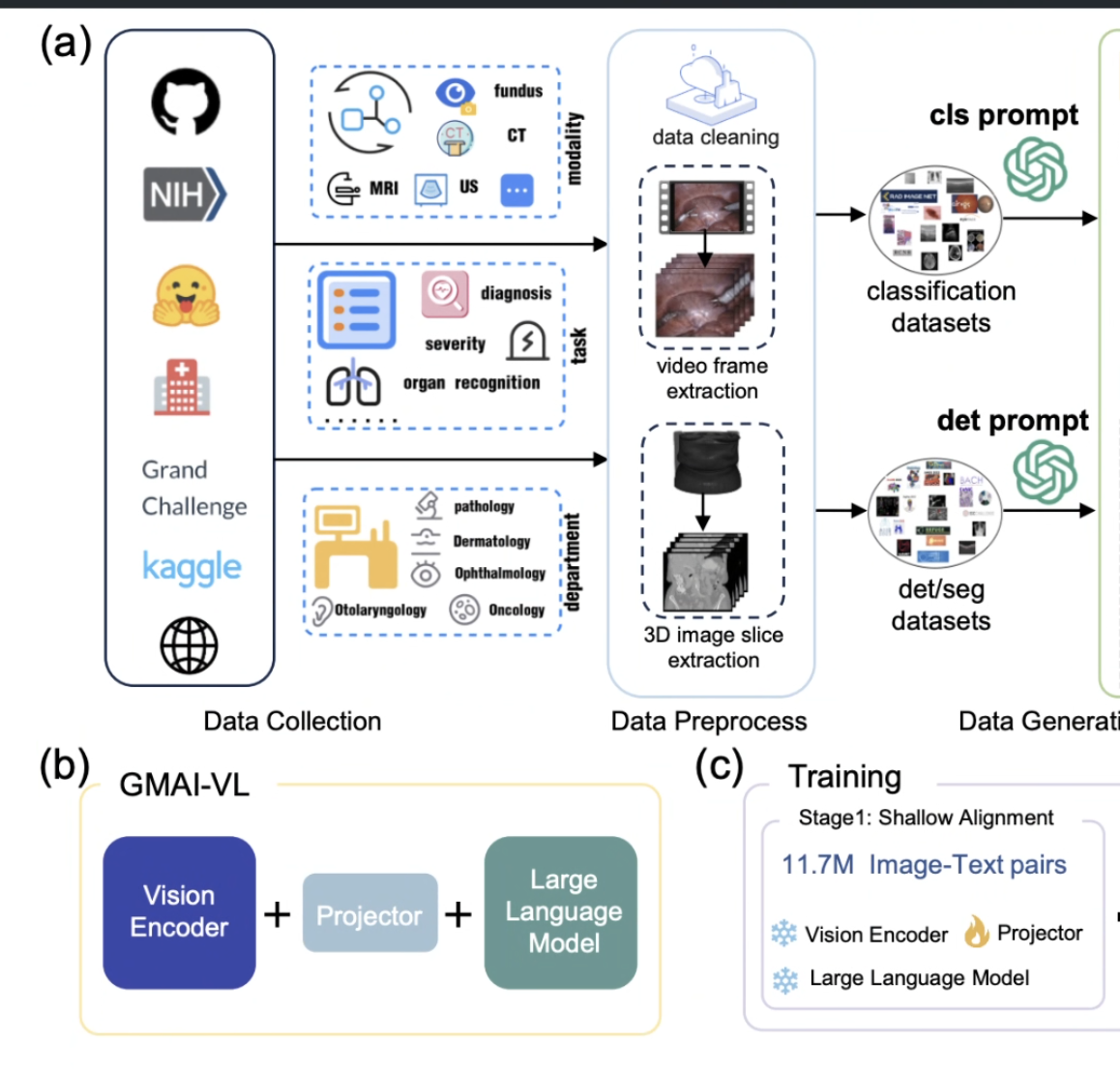

We build world-leading medical multimodal large models. GMAI-VL, trained on 5.5M image-text pairs across 18 clinical specialties, achieves state-of-the-art (SOTA) results on medical VQA and diagnostic reasoning tasks. SlideChat is the first vision-language assistant to directly understand gigapixel whole-slide pathology images. GMAI-VL-R1 introduces reinforcement learning, improving average accuracy by ~30% across eight imaging modalities and surpassing models 36× larger.

GMAI-VL

GMAI-VL-5.5M

GMAI-VL-R1

GMAI-MMBench

Explore GMAI-VL →

03

143K

3D masks (SA-Med3D)

247

anatomy classes

SOTA

3D segmentation

Medical Image Segmentation

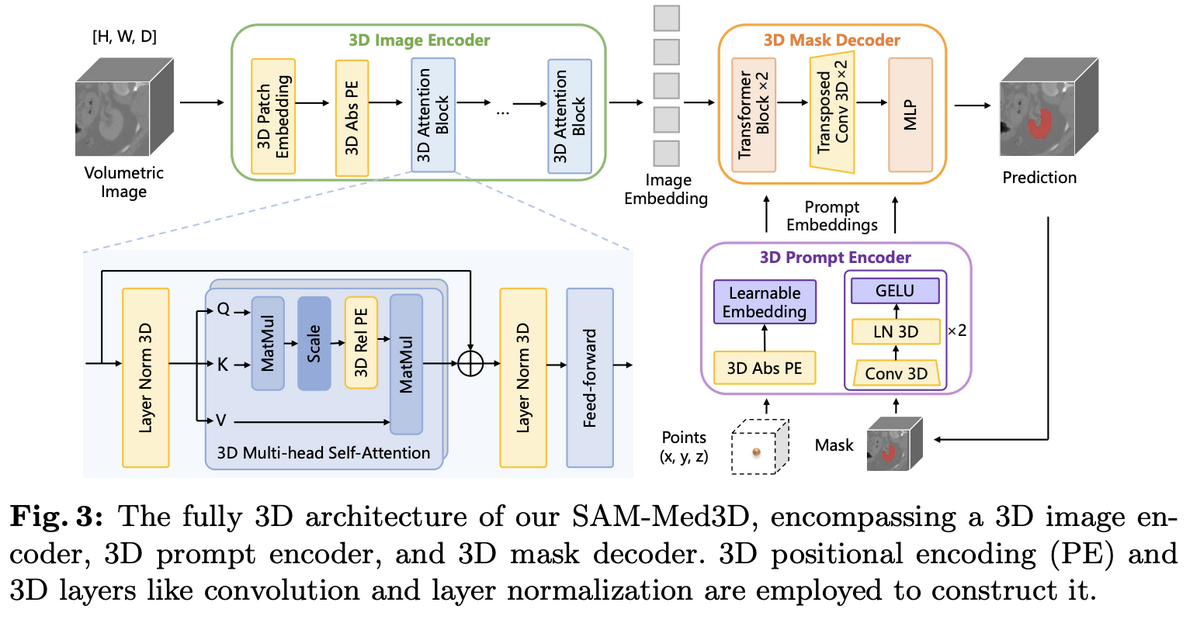

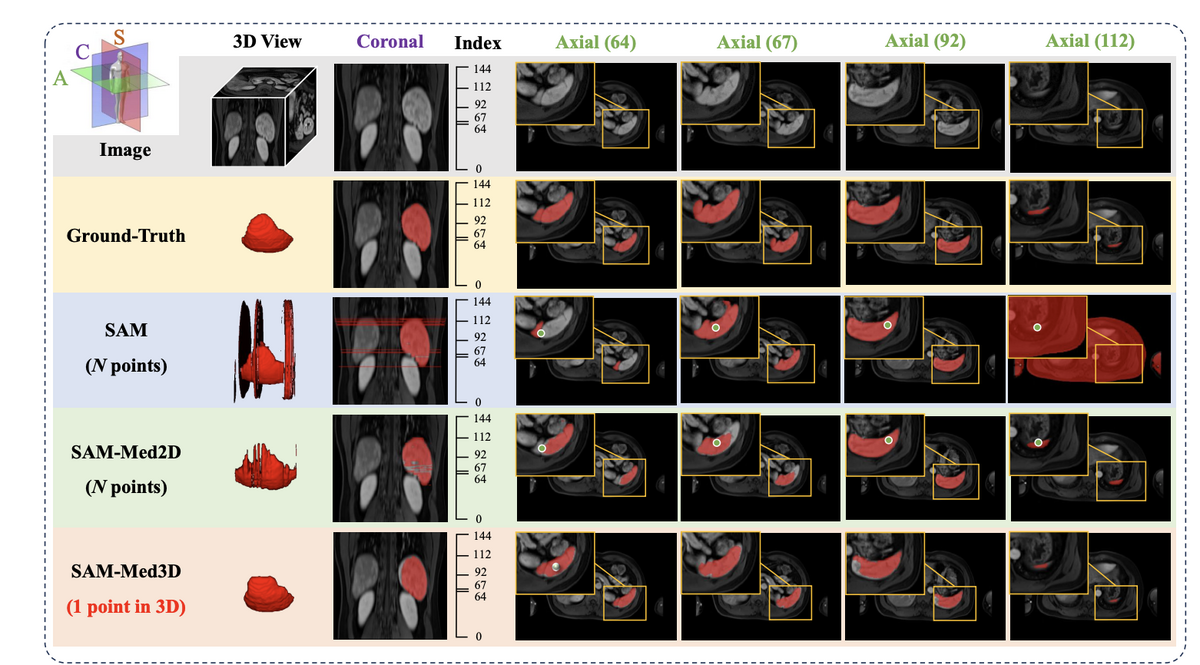

Segment Anything in Medicine: SAM-Med2D and SAM-Med3D

We adapt the Segment Anything Model (SAM) to the medical domain, delivering universal promptable segmentation across 14 imaging modalities and 247 anatomical and lesion categories. SAM-Med2D leverages SA-Med2D-20M for 2D slice segmentation, while SAM-Med3D introduces a fully native 3D architecture trained on SA-Med3D-140K (22K volumes, 143K masks), achieving a 60% Dice improvement over SAM with just a single 3D point prompt.

SAM-Med3D

SAM-Med2D

SA-Med3D-140K

Interactive Segmentation

Explore SAM-Med3D →

04

81.17%

VQA accuracy (TCGA)

18/22

SOTA tasks

176K

VQA training pairs

Clinical AI Systems

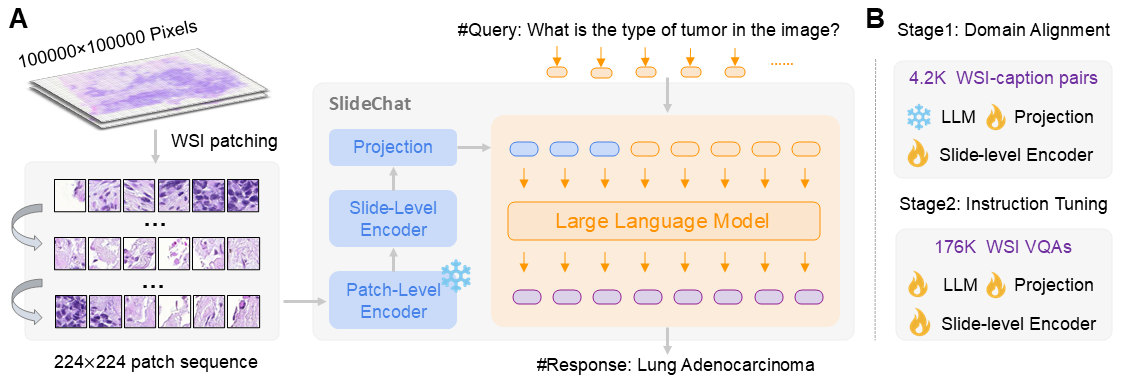

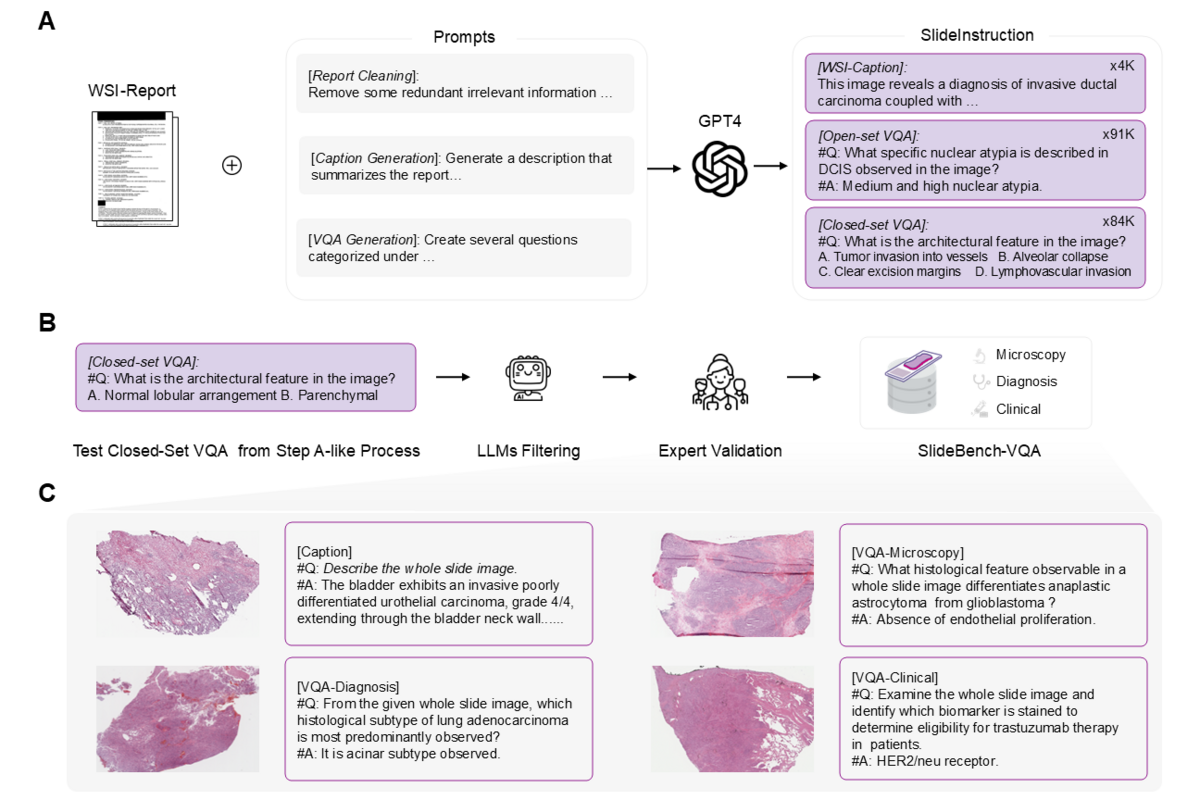

Whole-Slide Pathology Intelligence: SlideChat and the Future of Clinical AI

SlideChat is the first vision-language assistant capable of understanding gigapixel whole-slide pathology images in their entirety. Trained on SlideInstruction (4.2K WSI captions + 176K VQA pairs from TCGA), SlideChat achieves SOTA on 18 of 22 tasks on SlideBench, reaching 81.17% accuracy on SlideBench-VQA (TCGA), a 13.47% improvement over the next best model. Accepted at CVPR 2025.

SlideChat (CVPR 2025)

SlideInstruction

SlideBench

WSI understanding

Explore SlideChat →

05

1.4B

parameters (STU-Net-H)

90.06%

mean DSC

104

anatomy classes

Medical Image Segmentation

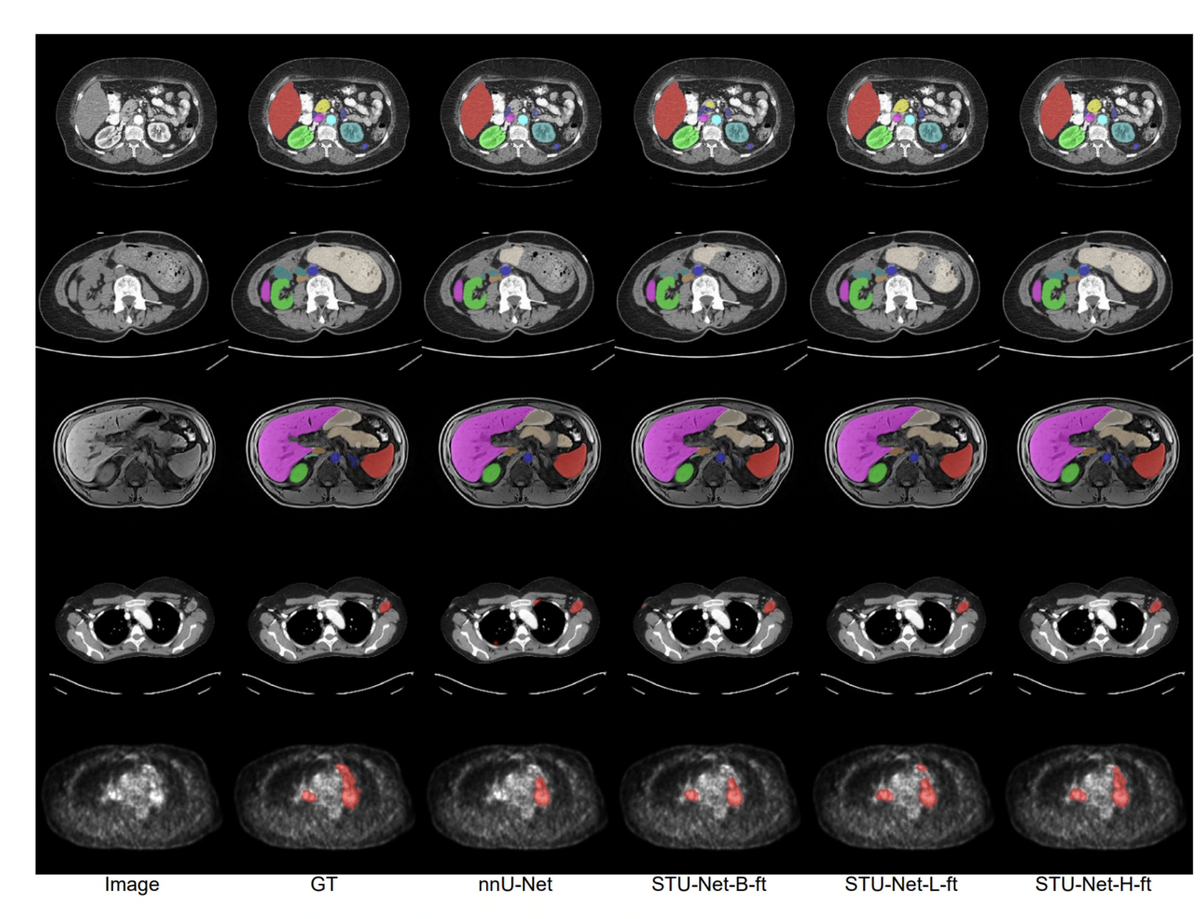

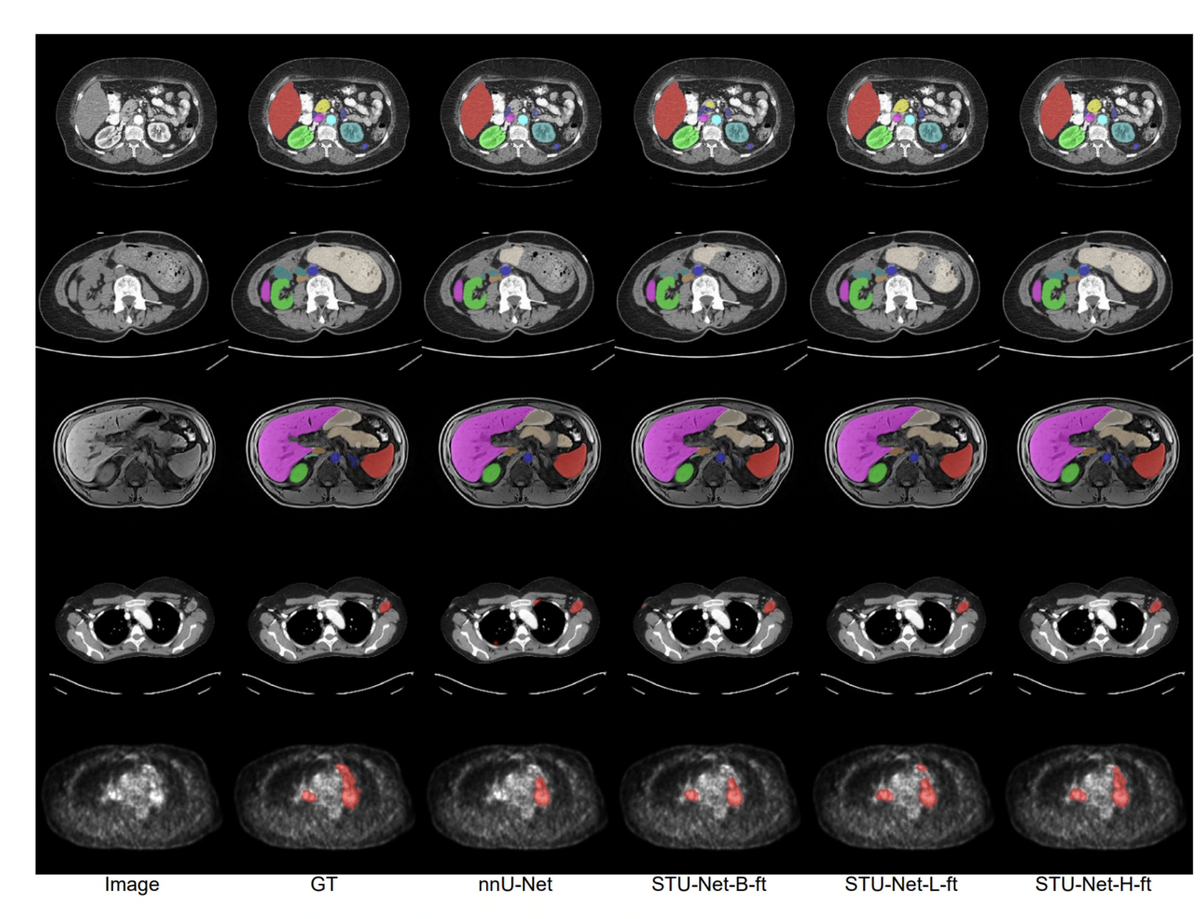

Scaling Laws in Medicine: STU-Net from 14M to 1.4B Parameters

STU-Net establishes scaling laws for 3D medical image segmentation. A family of four models, S (14M), B (58M), L (440M), and H (1.4B), is pre-trained on TotalSegmentator (1,204 CT volumes, 104 anatomy classes). STU-Net-H achieves 90.06% mean Dice similarity coefficient (DSC), surpassing nnU-Net by 3.3 points and all Transformer competitors. At 1.4B parameters, a single universal model outperforms five category-specific specialist models, a decisive step toward a medical segmentation foundation model.
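For readers unfamiliar with the metric behind the 90.06% figure, the sketch below shows one common way to compute a mean DSC over anatomy classes. It is a minimal illustration, not the STU-Net evaluation code: the function names `dice_per_class` and `mean_dsc` are hypothetical, label 0 is assumed to be background, and averaging over every class present in either the prediction or the ground truth is an assumed convention that a given benchmark may define differently. Inputs are flattened voxel labels; real pipelines would typically operate on 3D arrays.

```python
from collections import Counter
from typing import Dict, Sequence


def dice_per_class(pred: Sequence[int], target: Sequence[int]) -> Dict[int, float]:
    """Per-class Dice: 2 * |P ∩ T| / (|P| + |T|) for each foreground label.

    `pred` and `target` are flattened voxel labels of equal length.
    Label 0 is treated as background and skipped (an assumption).
    """
    if len(pred) != len(target):
        raise ValueError("pred and target must have the same number of voxels")

    pred_count = Counter(pred)      # voxels predicted as each label
    target_count = Counter(target)  # voxels annotated as each label
    # Voxels where prediction and annotation agree, counted per label.
    overlap = Counter(p for p, t in zip(pred, target) if p == t)

    labels = (set(pred_count) | set(target_count)) - {0}
    return {
        c: 2.0 * overlap[c] / (pred_count[c] + target_count[c])
        for c in sorted(labels)
    }


def mean_dsc(pred: Sequence[int], target: Sequence[int]) -> float:
    """Unweighted mean of the per-class Dice scores."""
    scores = dice_per_class(pred, target)
    if not scores:
        raise ValueError("no foreground labels found")
    return sum(scores.values()) / len(scores)


if __name__ == "__main__":
    # Toy example: six voxels, two foreground classes.
    pred = [1, 1, 0, 2, 2, 0]
    target = [1, 0, 0, 2, 2, 2]
    print(dice_per_class(pred, target))
    print(mean_dsc(pred, target))
```

Because the mean is unweighted, a small structure counts as much as a large organ, which is one reason a single averaged score across 104 classes is a demanding summary.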

STU-Net-H (1.4B)

TotalSegmentator

MICCAI 2023 Champion

Transfer Learning

Explore STU-Net →

Scholarly Output

Recent Publications

arXiv · 2025

MedQ-Deg: A Multidimensional Benchmark for Evaluating MLLMs Across Medical Image Quality Degradations

arXiv · 2025

UniMedVL: Unifying Medical Multimodal Understanding and Generation through Observation-Knowledge-Analysis

Updates

Latest News

MedQ-Deg released — benchmarking MLLM robustness under medical image degradations

UniMedVL: First unified model for medical image understanding and generation

MedQ-Bench: New benchmark for medical image quality assessment in MLLMs

Welcoming new visiting researchers to GMAI Lab

Join Us

Building General Medical Intelligence

We are a research team at Shanghai AI Laboratory working toward General Medical Intelligence: AI that understands, reasons, and acts across the full spectrum of clinical and biomedical tasks. We collaborate with leading universities and hospitals worldwide. If you share this vision, we have open positions and welcome your inquiry.