Research

Featured Research

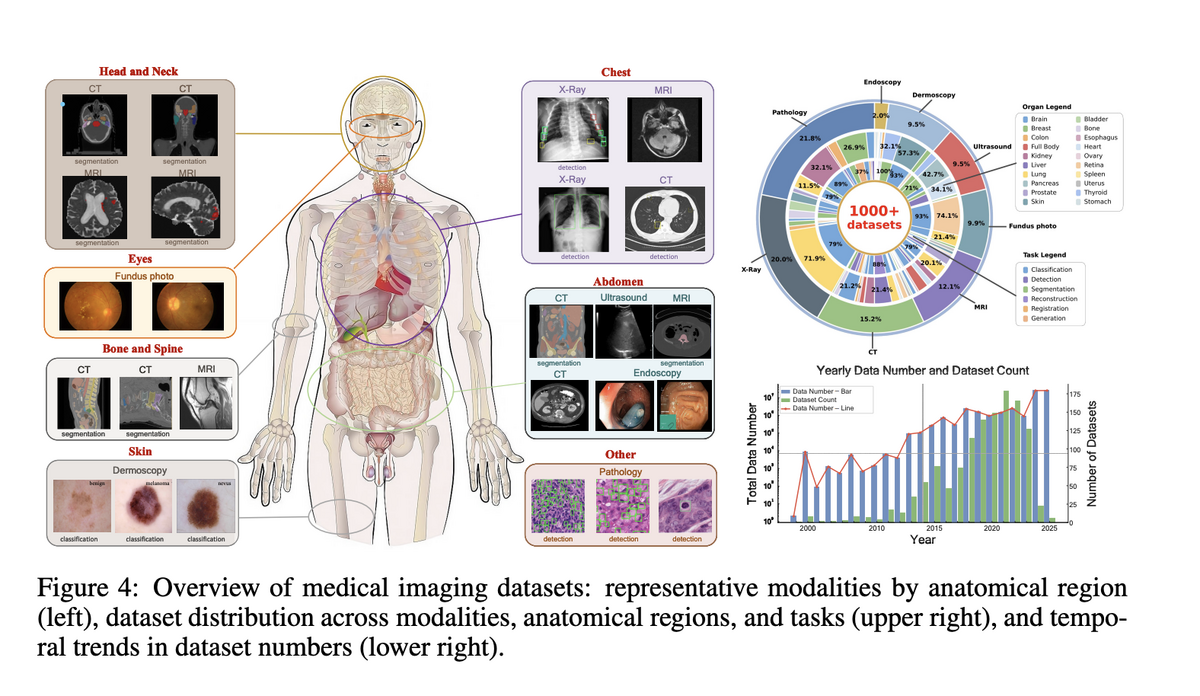

Medical Data Infrastructure

Project Imaging-X: Open Medical Imaging Data Ecosystem

A comprehensive survey and open-source platform integrating 1,000+ open-access medical imaging datasets. We propose a Metadata-Driven Fusion Paradigm (MDFP) to consolidate fragmented small datasets into large, coherent data resources, and build an interactive discovery portal for end-to-end automated dataset integration. The project has received collaboration interest from institutions including Stanford University.

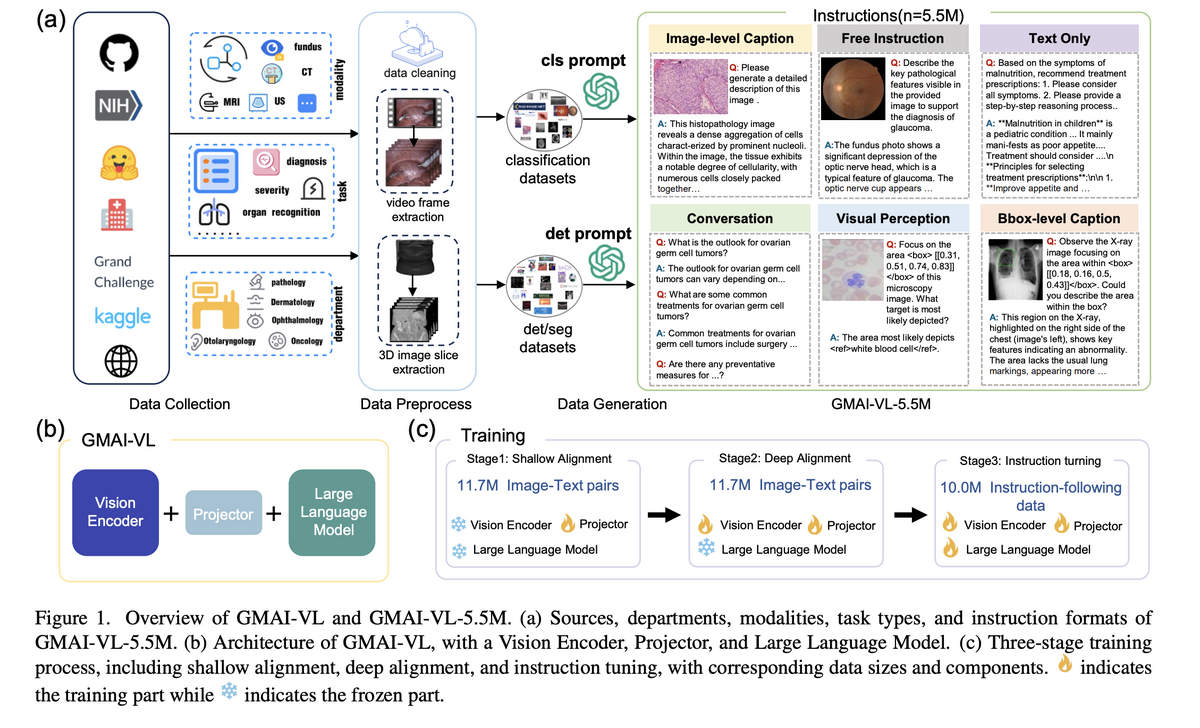

Medical Multimodal AI

GMAI-VL: General Medical Multimodal Vision-Language Model

General-purpose medical multimodal vision-language models. GMAI-VL is trained on GMAI-VL-5.5M (5.5M image-text pairs across 18 clinical specialties), while GMAI-VL-R1 adds reinforcement learning and achieves an average accuracy improvement of about 30% across eight imaging modalities.

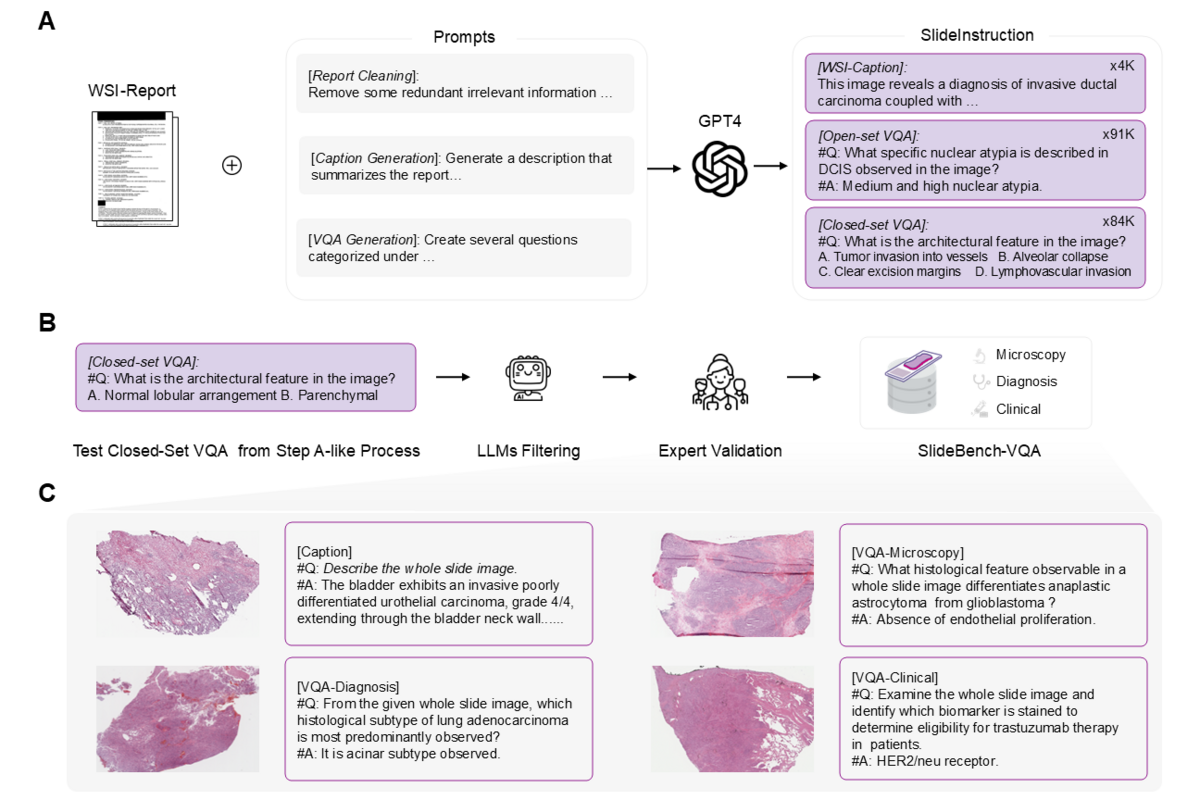

Clinical AI Systems

SlideChat: Vision-Language Assistant for Whole-Slide Pathology

The first vision-language assistant capable of understanding gigapixel whole-slide pathology images in their entirety. Trained on SlideInstruction (4.2K WSI captions, 176K VQA pairs) and evaluated on SlideBench, SlideChat achieves state-of-the-art performance on 18 of 22 tasks, including 81.17% accuracy on SlideBench-VQA (TCGA). Accepted at CVPR 2025.

Medical Image Segmentation

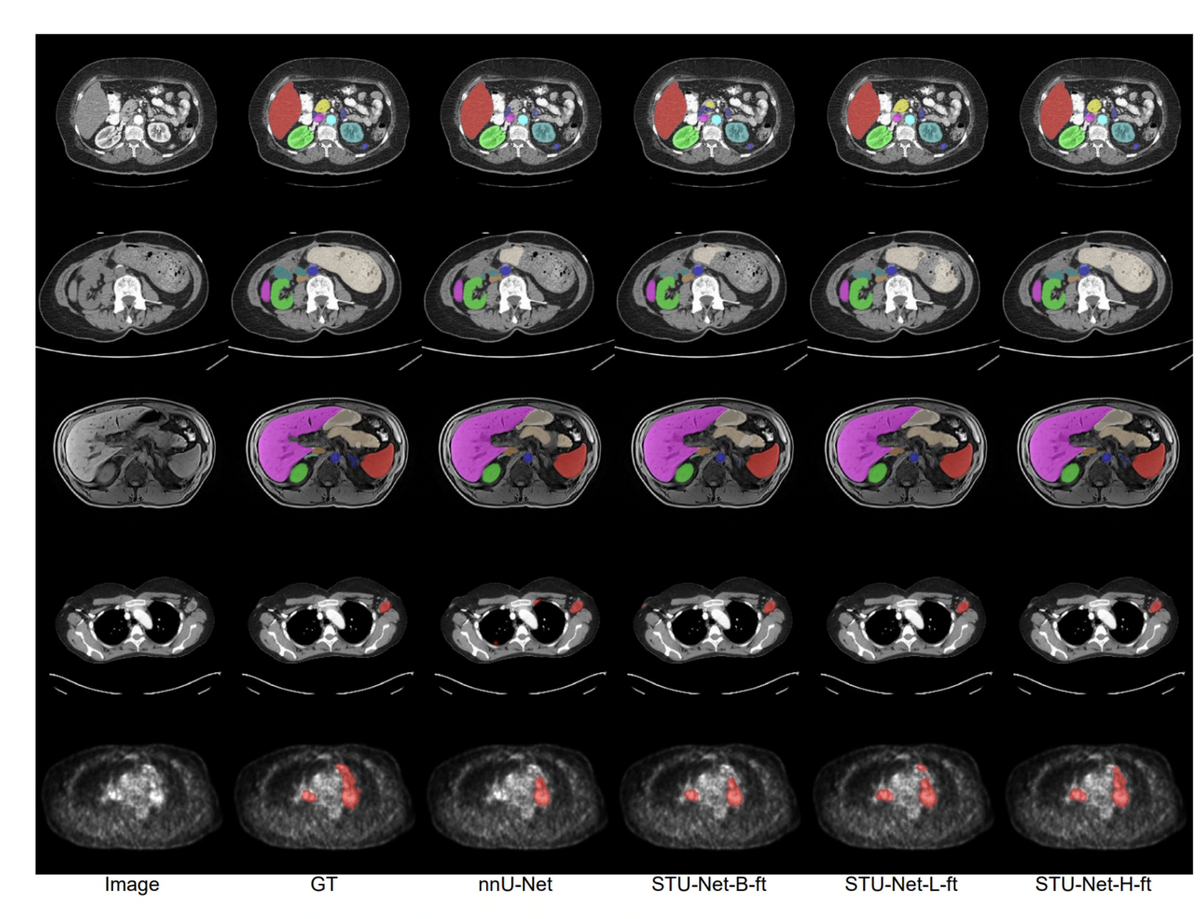

STU-Net: Scalable and Transferable Medical Image Segmentation Models

A family of scalable U-Net models (14M to 1.4B parameters) pre-trained on TotalSegmentator for universal medical image segmentation. STU-Net-H is the largest medical segmentation model to date, achieving a mean Dice similarity coefficient (DSC) of 90.06%. Won first place in the MICCAI 2023 ATLAS and SPPIN challenges and was runner-up in AutoPET II.
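
For readers unfamiliar with the DSC metric quoted above, the following is a minimal sketch of the standard Dice similarity coefficient, 2|A∩B| / (|A| + |B|), computed on two binary masks. Representing a mask as a set of voxel coordinates, the function name `dice`, and the convention of returning 1.0 for two empty masks are assumptions made for illustration; this is not the STU-Net evaluation code.

```python
def dice(pred, target):
    """Dice similarity coefficient between two binary masks.

    Each mask is given as an iterable of foreground voxel coordinates,
    e.g. (z, y, x) tuples. This representation is an illustrative assumption.
    """
    pred, target = set(pred), set(target)
    total = len(pred) + len(target)
    if total == 0:
        # Both masks empty: treated as perfect agreement (assumed convention).
        return 1.0
    return 2.0 * len(pred & target) / total


# Toy masks: three foreground voxels each, two of them shared.
pred_mask = {(0, 0, 0), (0, 0, 1), (0, 1, 1)}
true_mask = {(0, 0, 1), (0, 1, 1), (1, 1, 1)}

print(f"DSC = {dice(pred_mask, true_mask):.4f}")
```

A mean DSC is then the average of this value over all evaluated structures or cases.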

Medical Image Segmentation

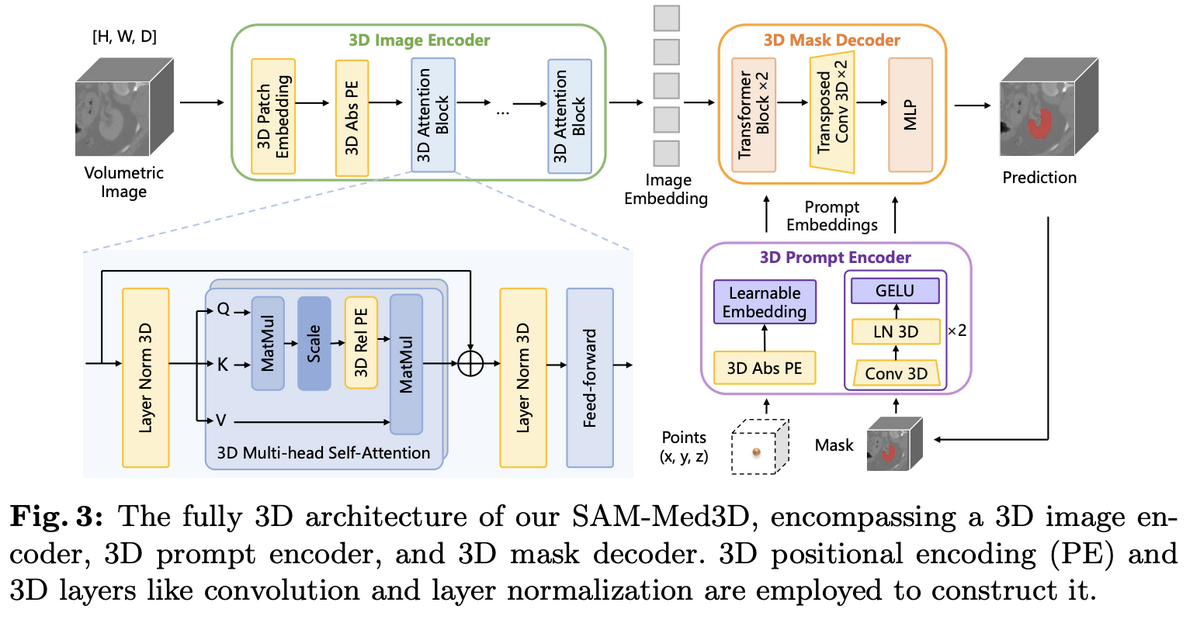

SAM-Med3D: Segment Anything in 3D Medical Images

Adapting the Segment Anything Model to volumetric medical imaging with a fully native 3D architecture. Trained on SA-Med3D-140K (22K volumes, 143K masks across 247 categories), SAM-Med3D achieves a 60% Dice improvement over SAM with a single 3D point prompt. The companion dataset SA-Med2D-20M (4.6M images, 19.7M masks) is the largest 2D medical segmentation dataset to date. Published at ECCV 2024 Workshop (Oral) and in IEEE TNNLS 2025.

Medical Image Segmentation

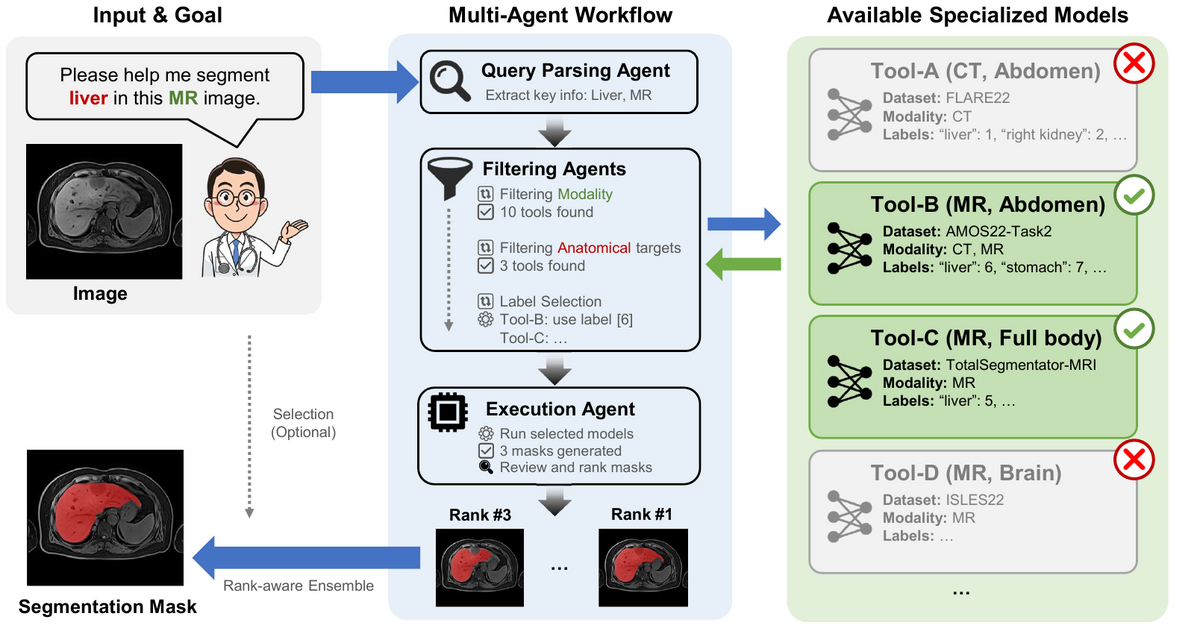

MedSegAgent: Universal Multi-Agent System for Medical Image Segmentation

A multi-agent system that orchestrates specialized dataset-specific segmentation models through natural language instructions. Instead of training a single universal model, MedSegAgent parses free-form requests, performs coarse-to-fine dataset matching, and integrates results from the best-matched models. Supports 23 datasets and 343 segmentation targets across CT, MRI, PET/CT, and ultrasound. Published in IEEE JBHI 2026.
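
The routing idea described above (parse a request, narrow the candidate datasets coarsely, then pick the best match) can be illustrated with a toy sketch. Everything here is hypothetical: the `DatasetModel` record, the three registry entries, and the plain keyword matching are stand-ins chosen for brevity, not MedSegAgent's actual agents, datasets, or matching procedure.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DatasetModel:
    name: str           # hypothetical identifier of a dataset-specific model
    modality: str       # e.g. "ct" or "mri"
    targets: frozenset  # segmentation targets the model supports


# Hypothetical registry; the real system covers 23 datasets and 343 targets.
REGISTRY = [
    DatasetModel("toy_abdomen_ct", "ct", frozenset({"liver", "spleen", "kidney"})),
    DatasetModel("toy_brain_mri", "mri", frozenset({"tumor", "ventricle"})),
    DatasetModel("toy_chest_ct", "ct", frozenset({"lung", "heart"})),
]


def match_models(request, registry=REGISTRY):
    """Return names of the best-matching models for a free-form request."""
    words = set(request.lower().replace(",", " ").split())
    # Coarse step: keep models whose modality is mentioned; else keep all.
    coarse = [m for m in registry if m.modality in words] or list(registry)
    # Fine step: score each candidate by how many requested targets it covers.
    scored = [(len(m.targets & words), m) for m in coarse]
    best = max((score for score, _ in scored), default=0)
    return [m.name for score, m in scored if score == best and score > 0]


print(match_models("Segment the liver and spleen on this CT scan"))
```

A real system would replace the keyword matching with language-model parsing and would merge the outputs of several matched models; the sketch only shows the coarse-then-fine control flow.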

Surgical AI & Robotics

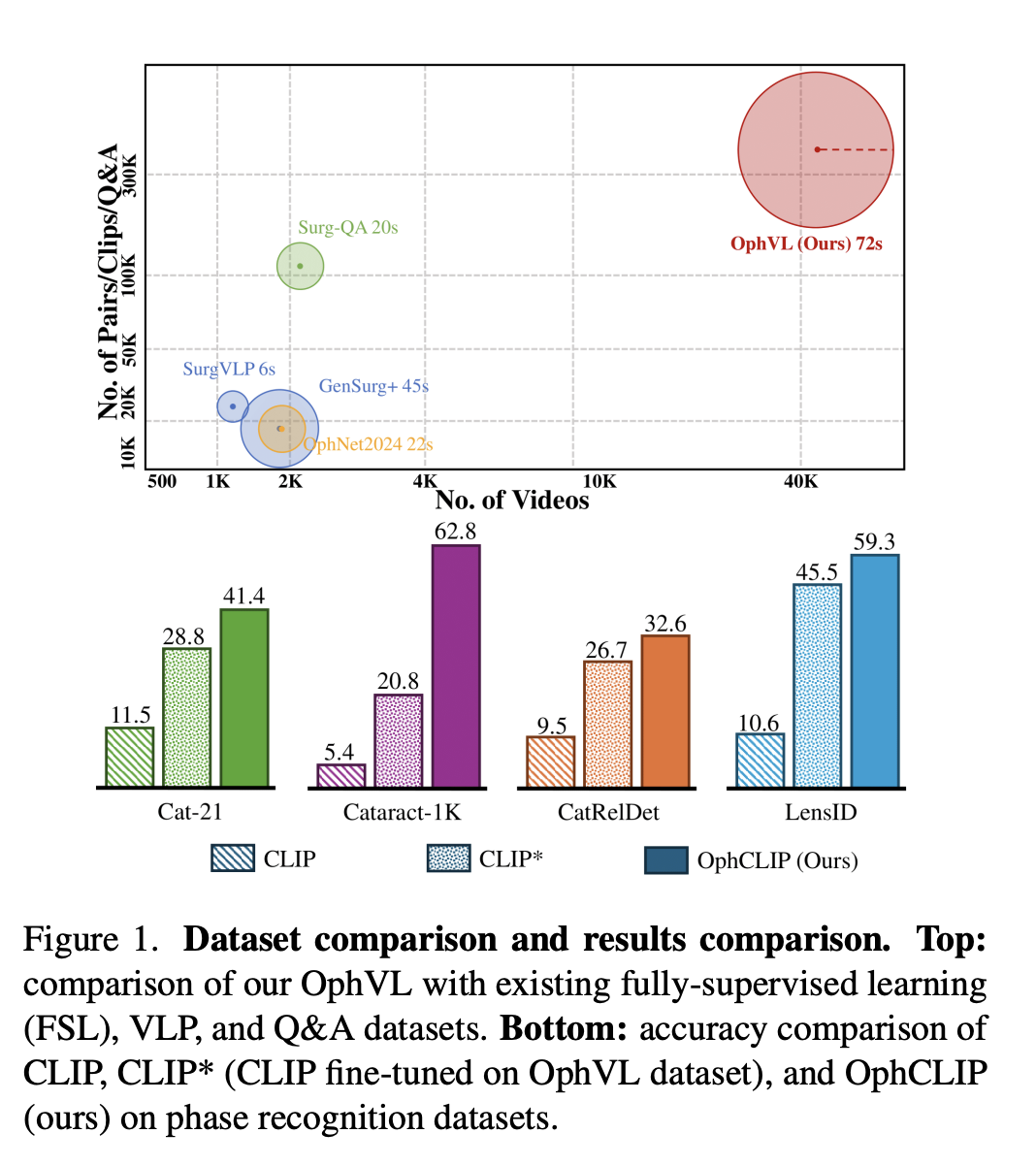

OphCLIP: Hierarchical Retrieval-Augmented Ophthalmic Surgical VLP

A hierarchical retrieval-augmented vision-language pretraining framework for ophthalmic surgical workflow understanding. Trained on OphVL (375K video-text pairs, 7.5K hours, 15× larger than existing surgical VLP datasets), OphCLIP achieves state-of-the-art zero-shot performance on 11 benchmarks for phase recognition and multi-instrument identification. Published at ICCV 2025.

Medical Multimodal AI

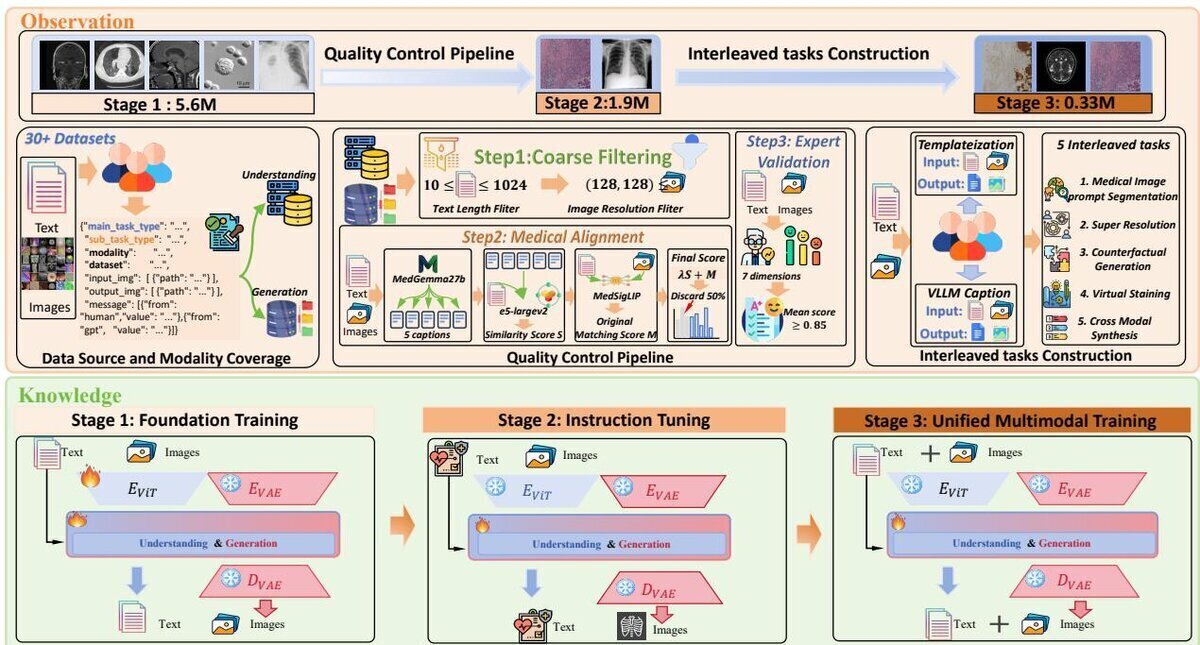

UniMedVL: Unified Medical Multimodal Understanding and Generation

The first unified medical multimodal model to combine image understanding and generation within a single architecture. Built on UniMed-5M (5.6M+ samples) with Progressive Curriculum Learning, UniMedVL achieves superior performance on 5 medical understanding benchmarks while matching specialized models in generation quality.

Medical Multimodal AI

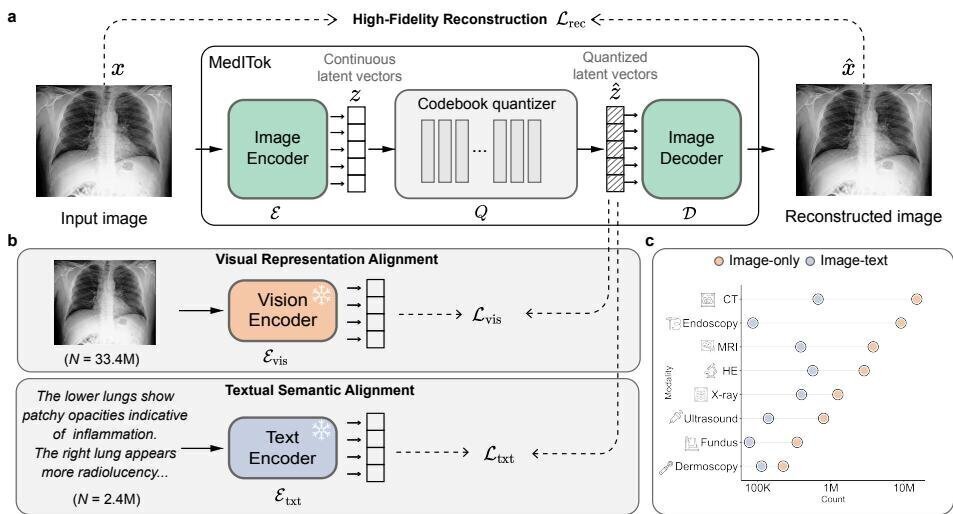

MedITok: Unified Medical Image Tokenizer

The first unified visual tokenizer for medical images that simultaneously preserves fine-grained anatomical structures and rich clinical semantics. Pre-trained on 33M+ medical images across 9 modalities, MedITok achieves state-of-the-art results on 30+ benchmarks spanning reconstruction, classification, generation, and VQA.

Medical AI Evaluation

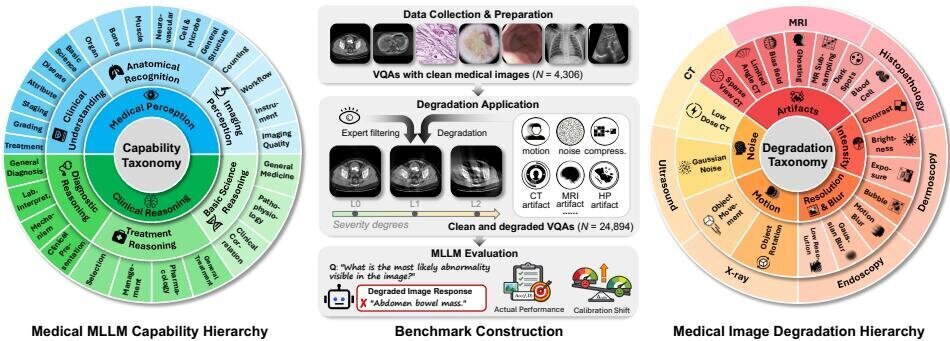

MedQ-Deg: Evaluating MLLMs Across Medical Image Quality Degradations

A comprehensive benchmark for evaluating medical multimodal large language models under clinically realistic image quality degradations. MedQ-Deg spans 18 degradation types, 30 fine-grained capability dimensions, and 7 imaging modalities with 24,894 QA pairs. Evaluation of 40 mainstream MLLMs reveals an "AI Dunning-Kruger Effect", in which models maintain inappropriately high confidence despite severe accuracy collapse under degradation.
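
One simple way to quantify the confidence-versus-accuracy mismatch described above is the gap between a model's mean stated confidence and its accuracy. The sketch below assumes each answer is recorded as a `(confidence, is_correct)` pair; the function name `overconfidence_gap` and the toy records are invented for illustration and are neither MedQ-Deg's metric nor its results.

```python
def overconfidence_gap(records):
    """Mean stated confidence minus accuracy for (confidence, is_correct) pairs.

    A positive value means the model is more confident than it is accurate.
    """
    if not records:
        raise ValueError("records must be non-empty")
    mean_conf = sum(conf for conf, _ in records) / len(records)
    accuracy = sum(1 for _, ok in records if ok) / len(records)
    return mean_conf - accuracy


# Toy values invented for illustration only; not benchmark results.
clean = [(0.9, True), (0.8, True), (0.9, True), (0.7, False)]
degraded = [(0.9, False), (0.8, True), (0.9, False), (0.8, False)]

print(f"clean gap:    {overconfidence_gap(clean):+.3f}")
print(f"degraded gap: {overconfidence_gap(degraded):+.3f}")
```

In this toy setup the gap widens under degradation because accuracy drops while stated confidence stays roughly the same, which is the pattern the benchmark description refers to.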

Surgical AI

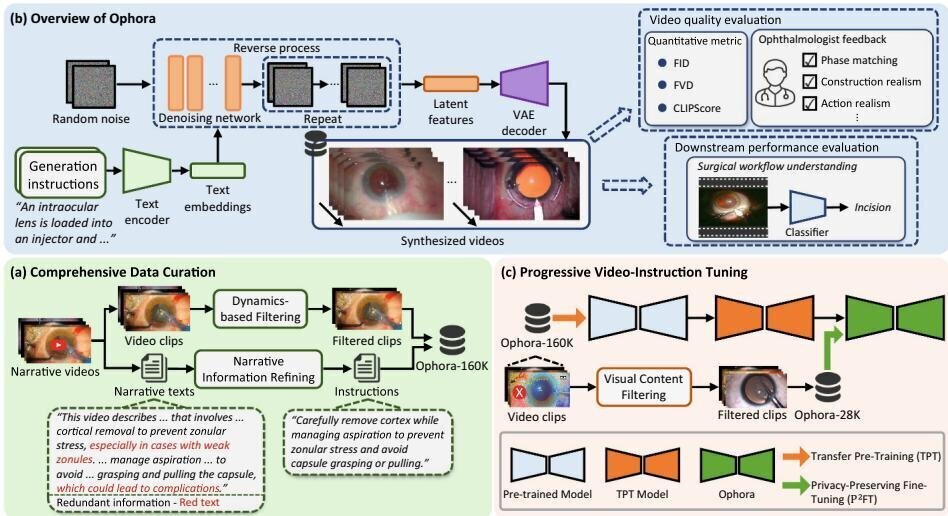

Ophora: Text-Guided Ophthalmic Surgical Video Generation

A pioneering model for generating realistic ophthalmic surgical videos from natural language instructions. Ophora is built on Ophora-160K, a large-scale dataset of over 160K video-instruction pairs curated from narrative surgical videos, and employs Progressive Video-Instruction Tuning to transfer spatial-temporal knowledge from a pre-trained text-to-video model while preserving patient privacy.

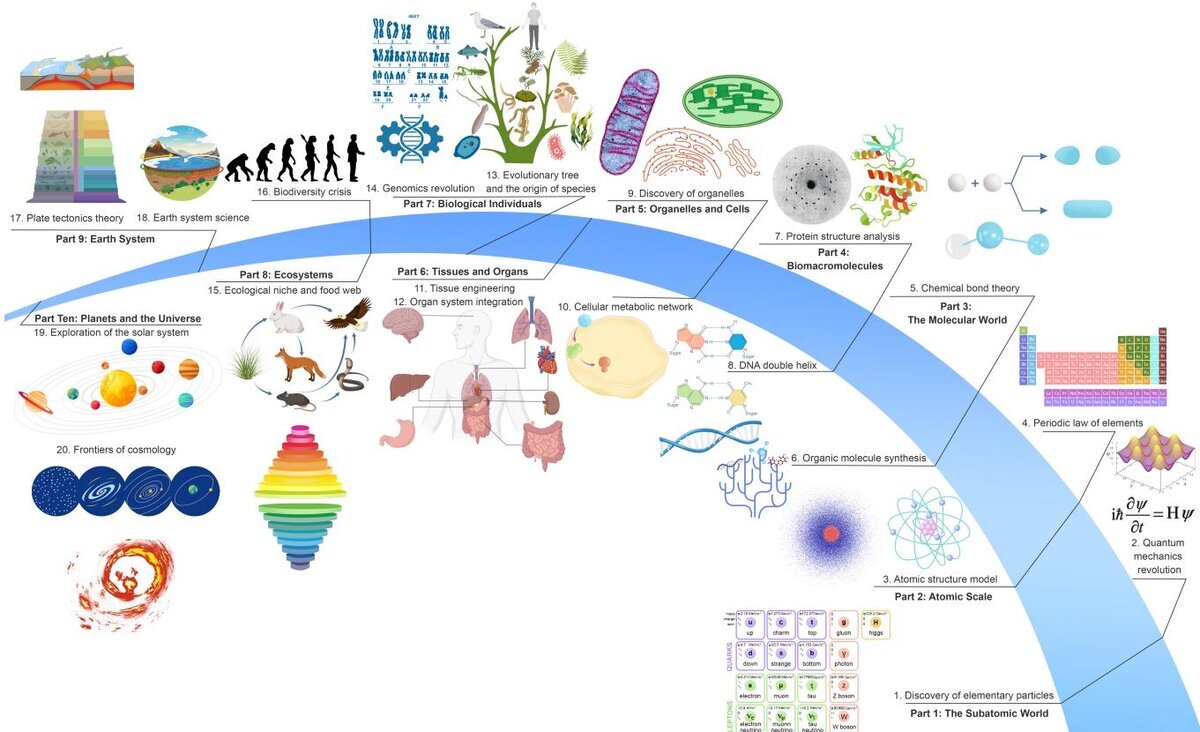

AI for Science

Survey of Scientific Large Language Models

A comprehensive, data-centric survey that reframes the development of Scientific Large Language Models (Sci-LLMs) as a co-evolution between models and their data substrate. Covering 270+ pre-/post-training datasets and 190+ benchmarks, the survey formulates a unified taxonomy of scientific data, traces the shift toward process-oriented evaluation, and outlines the paradigm of closed-loop autonomous scientific agents.

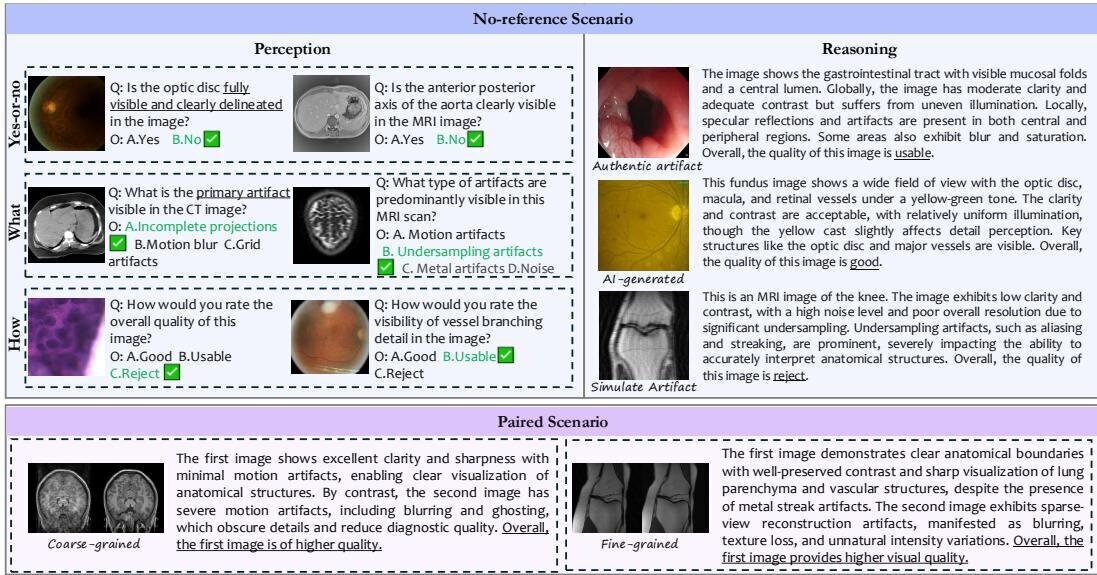

Medical AI Evaluation

MedQ-Bench: Evaluating Medical Image Quality Assessment in MLLMs

The first comprehensive benchmark for evaluating medical image quality assessment capabilities of multimodal large language models. MedQ-Bench establishes a perception-reasoning paradigm spanning 5 imaging modalities and 40+ quality attributes, with 2,600 perceptual queries and 708 reasoning assessments. Evaluation of 14 state-of-the-art MLLMs reveals preliminary but unstable perceptual and reasoning skills insufficient for reliable clinical use.