MedQ-Deg: A Multidimensional Benchmark for Evaluating MLLMs Across Medical Image Quality Degradations

Revealing the AI Dunning-Kruger Effect — Medical MLLMs Maintain Inappropriately High Confidence Despite Severe Accuracy Collapse Under Image Degradation

Multimodal Large Language Models (MLLMs) have demonstrated remarkable performance on medical vision-language benchmarks, in some cases approaching or even surpassing human experts. However, these results largely rely on carefully curated, high-quality medical images. In real clinical environments, images are frequently degraded by noise, motion artifacts, or hardware limitations, which raises a critical question: can MLLMs remain reliable under such imperfect conditions?

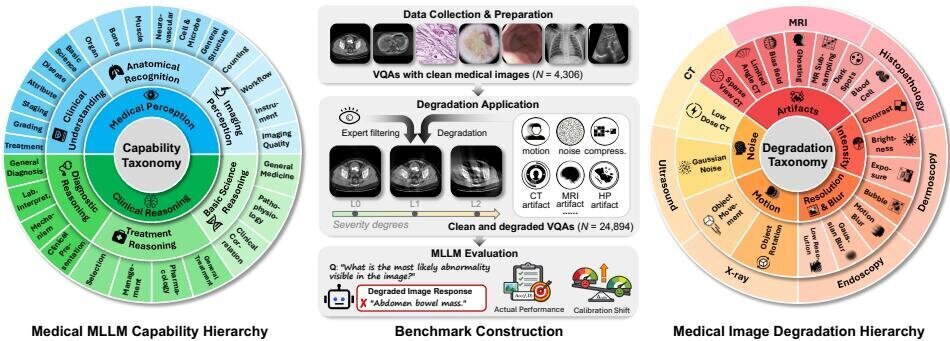

MedQ-Deg addresses this gap with a benchmark for multidimensional evaluation: 24,894 question-answer pairs spanning 18 distinct degradation types, 30 fine-grained capability dimensions, and 7 imaging modalities. Each degradation is implemented at three severity levels calibrated by expert radiologists. The benchmark also introduces the Calibration Shift metric, which quantifies the gap between a model's perceived confidence and its actual performance, to assess metacognitive reliability under degradation.
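To make the idea of a confidence-versus-performance gap concrete, here is a minimal sketch. It assumes the gap is computed per severity level as mean stated confidence minus accuracy; the exact Calibration Shift formula is not given on this page, so the function name `confidence_accuracy_gap`, the record layout, and the toy records below are all illustrative assumptions rather than the benchmark's definition or data.

```python
from collections import defaultdict
from statistics import mean


def confidence_accuracy_gap(records):
    """Return {severity_level: mean confidence - accuracy}.

    Each record is (severity_level, confidence in [0, 1], is_correct).
    A positive gap means the model is overconfident at that level.
    NOTE: this is an assumed stand-in for Calibration Shift, not the
    official formula.
    """
    by_level = defaultdict(list)
    for level, confidence, is_correct in records:
        if not 0.0 <= confidence <= 1.0:
            raise ValueError(f"confidence out of range: {confidence}")
        by_level[level].append((confidence, 1.0 if is_correct else 0.0))

    gaps = {}
    for level, pairs in by_level.items():
        mean_confidence = mean(c for c, _ in pairs)
        accuracy = mean(a for _, a in pairs)
        gaps[level] = mean_confidence - accuracy
    return gaps


# Hypothetical toy records, NOT benchmark data.
toy_records = [
    ("L0", 0.9, True), ("L0", 0.8, True), ("L0", 0.9, False), ("L0", 0.8, True),
    ("L2", 0.9, False), ("L2", 0.8, True), ("L2", 0.9, False), ("L2", 0.8, False),
]
gaps = confidence_accuracy_gap(toy_records)
```

On these toy records the stated confidence is identical at both levels while accuracy falls, so the gap grows from about 0.10 at L0 to about 0.60 at L2. That is the pattern of widening overconfidence the metric is meant to expose.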

Core Highlights

01 — The AI Dunning-Kruger Effect

The study provides large-scale empirical evidence of the AI Dunning-Kruger Effect: medical MLLMs remain markedly overconfident even as their true capabilities deteriorate. Under image degradation, models not only lose accuracy but also fail to recognize the boundaries of their own competence, maintaining inappropriately high confidence while making erroneous predictions. This overconfidence widens systematically with degradation severity: all 40 evaluated models exhibit a consistently positive and increasing calibration shift from L0 to L2. This metacognitive failure indicates that current models lack the self-awareness required for safe clinical deployment.

02 — Comprehensive Hierarchical Evaluation Framework

MedQ-Deg features a three-tier capability hierarchy grounded in the cognitive workflow of a practising clinician. Tasks are sourced from three top-tier medical benchmarks (GMAI-MMBench, OmniMedVQA, and MedXpertQA), with redundant items merged and the task structure reorganized. The hierarchy spans two high-level capabilities (medical perception and clinical reasoning), six mid-level clinical tasks (anatomical recognition, imaging perception, clinical understanding, basic science reasoning, diagnostic reasoning, and treatment reasoning), and 30 fine-grained skills. Degradations are organized into five physics-grounded categories (artifacts, intensity jitter, resolution & blur, motion interference, and noise) and include both general and modality-specific corruptions.
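One way to picture the upper two tiers of this hierarchy is as a mapping from capabilities to tasks, with per-task results rolled up to the capability level. The sketch below is illustrative only: the capability and task names come from the text above, but the assignment of tasks to capabilities, the `roll_up` helper, and the counts are assumptions, and the 30 fine-grained skills are omitted because they are not listed here.

```python
# Assumed grouping: the page names the two capabilities and six tasks,
# but this task-to-capability mapping is an illustration only.
HIERARCHY = {
    "medical perception": [
        "anatomical recognition",
        "imaging perception",
        "clinical understanding",
    ],
    "clinical reasoning": [
        "basic science reasoning",
        "diagnostic reasoning",
        "treatment reasoning",
    ],
}


def roll_up(task_counts):
    """Pool (num_correct, num_total) per task into per-capability accuracy.

    Tasks missing from `task_counts` are skipped; a capability with no
    evaluated items maps to None.
    """
    result = {}
    for capability, tasks in HIERARCHY.items():
        present = [task_counts[t] for t in tasks if t in task_counts]
        correct = sum(c for c, _ in present)
        total = sum(n for _, n in present)
        result[capability] = correct / total if total else None
    return result


# Hypothetical counts, NOT benchmark results.
toy_counts = {
    "anatomical recognition": (8, 10),
    "imaging perception": (7, 10),
    "clinical understanding": (9, 10),
    "basic science reasoning": (5, 10),
    "diagnostic reasoning": (4, 10),
    "treatment reasoning": (3, 10),
}
capability_accuracy = roll_up(toy_counts)
```

The sketch pools item counts before dividing (a micro-average), so tasks with more questions weigh more. Averaging per-task accuracies instead (a macro-average) would be an equally reasonable choice; which one the benchmark uses is not stated here.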

03 — Critical Findings Across 40 MLLMs

The evaluation covers 40 mainstream MLLMs: 9 commercial models, 21 open-source general models, and 10 medical-specialized models. Most models show severe robustness deficiencies, with a nonlinear “cliff effect”: perception remains relatively stable until a threshold is reached, after which vision-language integration collapses catastrophically. Even the best-performing model (InternVL3-Instruct 78B) experiences substantial accuracy drops at L2 severity. Across all model groups, Clinical Understanding is the strongest capability, while the reasoning dimensions (Basic Science, Diagnosis, Treatment) are critically weak. Treatment is the weakest of these, with multiple open-source models collapsing to near-zero accuracy.
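A simple way to look for a cliff-like pattern is to compare the accuracy drop between consecutive severity levels. The sketch below is a rough heuristic under stated assumptions, not the analysis used in the study: `largest_drop` is a hypothetical helper, and the accuracy values are made up for illustration.

```python
def largest_drop(accuracy_by_level):
    """Return (from_level, to_level, drop) for the steepest consecutive drop.

    `accuracy_by_level` must list levels in order of increasing severity;
    dicts preserve insertion order in Python 3.7+.
    """
    levels = list(accuracy_by_level)
    if len(levels) < 2:
        raise ValueError("need at least two severity levels")
    steps = [
        (a, b, accuracy_by_level[a] - accuracy_by_level[b])
        for a, b in zip(levels, levels[1:])
    ]
    return max(steps, key=lambda step: step[2])


# Hypothetical accuracies, NOT benchmark results.
toy_accuracy = {"L0": 0.80, "L1": 0.76, "L2": 0.40}
from_level, to_level, drop = largest_drop(toy_accuracy)
```

In this toy case nearly all of the loss occurs between L1 and L2, while the L0 to L1 step is small. A gradual, roughly linear decline would instead spread the loss evenly across steps.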

MedQ-Deg establishes the most comprehensive characterization of medical MLLM behavior under image quality variations to date. By revealing the AI Dunning-Kruger Effect and providing multidimensional analysis across capability dimensions, degradation categories, and imaging modalities, MedQ-Deg drives progress toward medical MLLMs that are robust and trustworthy in real clinical practice. The benchmark demonstrates that current models universally fail to calibrate their confidence under degradation, posing severe risks for clinical deployment where overconfident erroneous inferences may prevent necessary human oversight.

Key Contributions

- Constructed MedQ-Deg, a systematic benchmark featuring a three-tier hierarchical evaluation framework with 24,894 QA pairs across 18 degradation types, 30 fine-grained capability dimensions, and 7 imaging modalities, with severity levels calibrated by expert radiologists.

- Introduced Calibration Shift, a quantitative metric providing large-scale empirical evidence of the AI Dunning-Kruger Effect: medical MLLMs remain markedly overconfident even as their true capabilities deteriorate, and this overconfidence systematically widens with increasing degradation severity.

- Conducted extensive evaluation of 40 mainstream MLLMs spanning commercial, open-source general, and medical-specialized models, providing the most comprehensive characterization of medical MLLM behavior under image quality variations across multiple capability dimensions and degradation categories.

Authors

Jiyao Liu*, Junzhi Ning*, Chenglong Ma*, Wanying Qu, Jianghan Shen, Siqi Luo, Jinjie Wei, Jin Ye, Pengze Li, Tianbin Li, Jiashi Lin, Hongming Shan, Xinzhe Luo, Xiaohong Liu, Lihao Liu, Junjun He†, Ningsheng Xu†