MedSegAgent: A Universal and Scalable Multi-Agent System for Instructive Medical Image Segmentation

Orchestrating specialized segmentation models through natural language instructions, coarse-to-fine dataset matching, and multi-model result integration

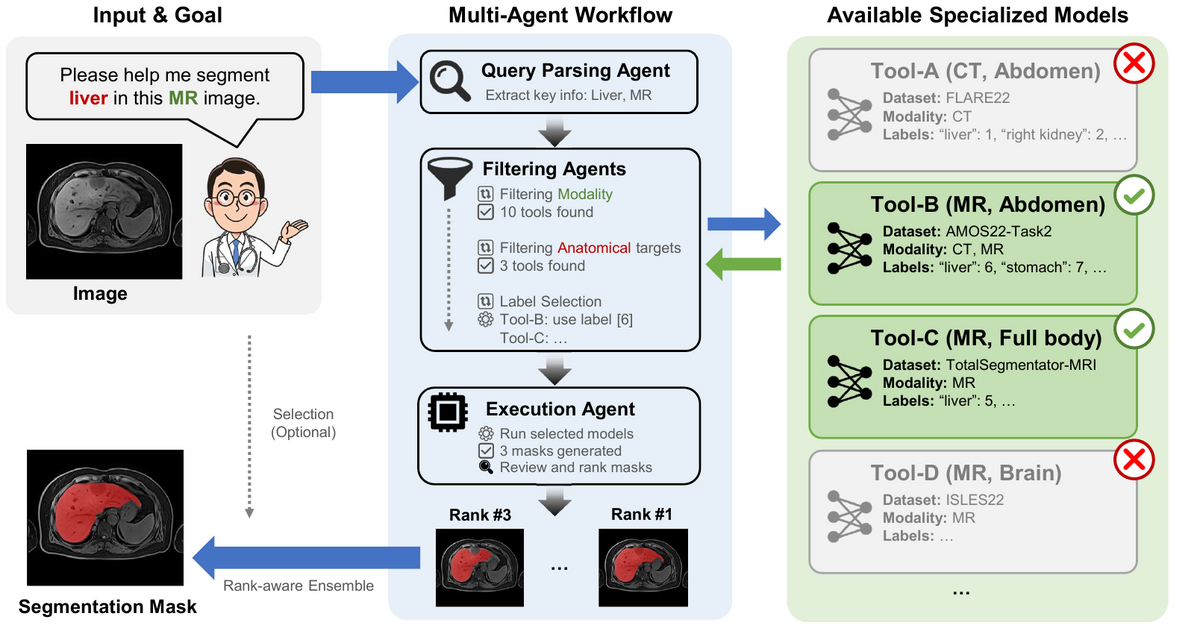

Medical image segmentation has seen remarkable advances with universal models like STU-Net and SAM-Med3D, yet no single model can cover the full diversity of clinical segmentation tasks across all modalities and anatomical targets. MedSegAgent takes a fundamentally different approach: instead of training one monolithic model, it orchestrates a library of specialized, dataset-specific models through a multi-agent system driven by natural language.

Given a free-form segmentation request such as "Please help me segment liver in this MR image", MedSegAgent parses the query to extract modality and target information, then performs a three-stage coarse-to-fine filtering: modality filtering narrows candidates from the full library, anatomy filtering identifies the relevant body region, and label selection pinpoints the exact segmentation target. The matched models are executed in parallel, and their outputs are integrated via a rank-aware ensemble strategy.
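A minimal sketch can show what the parsing step has to produce. In MedSegAgent this step is handled by an agent, so the keyword-and-regex parser below is only a hypothetical stand-in. The names `MODALITY_SYNONYMS`, `ParsedQuery`, and `parse_query` are illustrative and are not the project's API. The sketch simply turns a request into a modality and a target string that the downstream filters can consume.

```python
import re
from dataclasses import dataclass
from typing import Optional

# Hypothetical synonym table. Order matters: PET/CT is checked before CT.
MODALITY_SYNONYMS = [
    ("PET/CT", ["pet/ct", "pet-ct", "pet"]),
    ("CT", ["ct", "computed tomography"]),
    ("MRI", ["mri", "mr", "magnetic resonance"]),
    ("Ultrasound", ["ultrasound", "sonography"]),
]

@dataclass
class ParsedQuery:
    modality: Optional[str]
    target: Optional[str]

def parse_query(query: str) -> ParsedQuery:
    """Rule-based stand-in for the query-parsing agent (hypothetical)."""
    text = query.lower()
    modality = None
    for name, synonyms in MODALITY_SYNONYMS:
        # Word boundaries keep "mr" from matching inside unrelated words.
        if any(re.search(rf"\b{re.escape(s)}\b", text) for s in synonyms):
            modality = name
            break
    # Take the shortest phrase between "segment [the]" and "in/on/from".
    m = re.search(r"segment\s+(?:the\s+)?(.+?)\s+(?:in|on|from)\b", text)
    target = m.group(1) if m else None
    return ParsedQuery(modality, target)

print(parse_query("Please help me segment liver in this MR image"))
# ParsedQuery(modality='MRI', target='liver')
```

A rule-based parser like this fails on paraphrased or indirect requests. A language-model agent can handle those.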

The current system integrates 23 datasets and supports 343 segmentation targets across CT, MRI, PET/CT, and ultrasound modalities. This architecture is inherently scalable: adding a new segmentation capability requires only registering a new dataset metadata entry and its trained model, with no retraining of the orchestration system.
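The registration step can be illustrated with a sketch that loads one metadata entry into an in-memory library. The JSON field names (`dataset`, `modalities`, `body_region`, `labels`, `model_path`) and the `ModelLibrary` class are hypothetical, and the project's actual metadata schema may differ. The point is that registration only adds data. No orchestration logic is retrained or edited.

```python
import json
from dataclasses import dataclass
from typing import Dict, List

# Hypothetical metadata entry; field names and model_path are illustrative only.
EXAMPLE_ENTRY = """
{
  "dataset": "KiTS23",
  "modalities": ["CT"],
  "body_region": "Abdomen",
  "labels": {"1": "kidney", "2": "kidney tumor", "3": "kidney cyst"},
  "model_path": "models/kits23"
}
"""

@dataclass
class DatasetEntry:
    dataset: str
    modalities: List[str]
    body_region: str
    labels: Dict[str, str]  # label id -> target name
    model_path: str         # location of the dataset-specific trained model

class ModelLibrary:
    """In-memory registry of dataset-specific models (hypothetical)."""

    def __init__(self) -> None:
        self.entries: Dict[str, DatasetEntry] = {}

    def register(self, json_text: str) -> DatasetEntry:
        # Registration parses and stores the entry; no orchestration code changes.
        entry = DatasetEntry(**json.loads(json_text))
        self.entries[entry.dataset] = entry
        return entry

    def num_targets(self) -> int:
        # Total number of segmentation targets across all registered datasets.
        return sum(len(e.labels) for e in self.entries.values())

library = ModelLibrary()
library.register(EXAMPLE_ENTRY)
print(library.num_targets())  # 3
```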

Key Features

Universal & Scalable

Handles diverse medical image segmentation tasks through natural language instructions. To add a new modality or target, you only need a JSON metadata entry and the corresponding trained model. The core system does not need retraining.

Precise Automation

Coarse-to-fine filtering (modality → anatomy → label) automatically selects the most suitable segmentation model from the library, without manual intervention.
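The following minimal sketch runs the three filtering stages over a toy library. Several parts are simplifying assumptions:

- the library entries;
- the `TARGET_TO_REGION` lookup, which stands in for the agent that infers the body region;
- exact-string label matching.

The real system makes these decisions with agents over the full metadata. `coarse_to_fine` is a hypothetical name.

```python
from typing import Dict, List, Tuple

# Hypothetical miniature library; real entries and label names differ.
LIBRARY: List[Dict] = [
    {"dataset": "TotalSegmentator v2", "modalities": ["CT"],
     "region": "Whole-body", "labels": ["liver", "spleen"]},
    {"dataset": "TotalSegmentator MRI", "modalities": ["MRI"],
     "region": "Whole-body", "labels": ["liver", "spleen"]},
    {"dataset": "AMOS22", "modalities": ["CT", "MRI"],
     "region": "Abdomen", "labels": ["liver", "spleen", "pancreas"]},
    {"dataset": "BraTS21", "modalities": ["MRI"],
     "region": "Head & neck", "labels": ["whole tumor", "tumor core", "enhancing tumor"]},
]

# Hypothetical stand-in for the anatomy agent: target -> body region.
TARGET_TO_REGION = {"liver": "Abdomen", "pancreas": "Abdomen", "whole tumor": "Head & neck"}

def coarse_to_fine(modality: str, target: str,
                   library: List[Dict] = LIBRARY) -> List[Tuple[str, str]]:
    # Stage 1: modality filtering keeps datasets acquired with the requested modality.
    stage1 = [d for d in library if modality in d["modalities"]]
    # Stage 2: anatomy filtering keeps the matching region plus whole-body datasets;
    # if the region is unknown, this stage is skipped.
    region = TARGET_TO_REGION.get(target)
    stage2 = stage1 if region is None else [
        d for d in stage1 if d["region"] in (region, "Whole-body")]
    # Stage 3: label selection returns (dataset, label) pairs naming the target.
    return [(d["dataset"], label) for d in stage2 for label in d["labels"] if label == target]

print(coarse_to_fine("MRI", "liver"))
# [('TotalSegmentator MRI', 'liver'), ('AMOS22', 'liver')]
```

Keeping whole-body datasets at the anatomy stage lets a general model compete with a region-specific one. This is one way several candidates can arise for the ensemble step.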

Enhanced Robustness

Multi-model integration and rank-aware ensemble improve reliability. When multiple candidate models match a query, their outputs are combined to reduce individual model failures.
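The text does not spell out the ensemble rule, so the following is only one plausible reading of "rank-aware", built on three assumptions:

- each matched model carries a rank from the matching stage, where 1 is the best match;
- each model's vote is weighted by 1/rank;
- a voxel is kept as foreground when the normalized weighted vote reaches a threshold.

To keep the sketch dependency-free, masks are flattened lists of 0/1 values. The function name and the weighting scheme are hypothetical.

```python
from typing import List, Sequence

def rank_aware_ensemble(masks: Sequence[Sequence[int]], ranks: Sequence[int],
                        threshold: float = 0.5) -> List[int]:
    """Fuse flattened binary masks, weighting each model's vote by 1/rank (hypothetical)."""
    if not masks or len(masks) != len(ranks):
        raise ValueError("need one rank per mask")
    n = len(masks[0])
    if any(len(m) != n for m in masks):
        raise ValueError("all masks must have the same number of voxels")
    weights = [1.0 / r for r in ranks]  # better-ranked models get larger weights
    total = sum(weights)
    fused = []
    for i in range(n):
        vote = sum(w * m[i] for w, m in zip(weights, masks))
        fused.append(1 if vote / total >= threshold else 0)
    return fused

masks = [[1, 1, 0, 0], [1, 0, 1, 0], [0, 0, 1, 1]]
print(rank_aware_ensemble(masks, ranks=[1, 2, 3]))  # [1, 1, 0, 0]
print(rank_aware_ensemble(masks, ranks=[1, 1, 1]))  # [1, 0, 1, 0]
```

With ranks [1, 2, 3], the top-ranked model can keep a voxel on its own: its weight of 1 outweighs 0.5 + 0.33 from the others. With equal ranks, the rule reduces to plain majority voting.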

Supported Datasets (23 total)

| Dataset | Modalities | Body Region | Representative Targets |
|---|---|---|---|
| TotalSegmentator v2 | CT | Whole-body | 117 structures (organs, vessels, bones, brain) |
| TotalSegmentator MRI | MRI | Whole-body | 56 structures (organs, vessels, spine, muscles) |
| AutoPET | PET/CT | Whole-body | Whole-body tumor sites |
| SegRap2023 | CT | Head & neck | 45 OAR structures, GTVp, GTVnd |
| BraTS21 | MRI | Head & neck | Whole tumor, tumor core, enhancing tumor |
| AMOS22 | MRI, CT | Abdomen | 15 abdominal and pelvic structures |
| MM-WHS | MRI, CT | Heart | Cardiac chambers, myocardium, great vessels |
| KiTS23 | CT | Abdomen | Kidneys, renal tumors, renal cysts |
| + 15 more datasets covering thorax, abdomen, and head & neck regions | | | |

MedSegAgent demonstrates that multi-agent orchestration offers a practical and scalable alternative to training ever-larger monolithic segmentation models. It decouples language understanding from segmentation execution. This turns the growing ecosystem of specialized medical models into a unified, language-driven segmentation service. The system currently supports 23 datasets and 343 targets. It is designed so that each newly trained and registered model immediately expands the system's capabilities without retraining.

Key Contributions

- Proposed MedSegAgent, the first multi-agent system for instructive medical image segmentation driven by natural language, integrating 23 datasets and 343 segmentation targets.

- Designed a coarse-to-fine dataset matching pipeline (modality → anatomy → label) that automatically selects the most suitable segmentation models for a given query.

- Introduced rank-aware ensemble integration that combines outputs from multiple matched models to improve segmentation robustness and reliability.

- Built an extensible architecture where a new segmentation capability needs only a single JSON metadata entry and its trained model, with no retraining of the orchestration system.

Authors

Ziyan Huang, Haoyu Wang, Jin Ye, Yuanfeng Ji, Xiaowei Hu, Lihao Liu, Zhikai Yang, Wei Li, Ming Hu, Yanzhou Su, Tianbin Li, Yun Gu, Shaoting Zhang, Yu Qiao, Lixu Gu, Junjun He

IEEE Journal of Biomedical and Health Informatics (JBHI), 2026