Ophora: A Large-Scale Data-Driven Text-Guided Ophthalmic Surgical Video Generation Model

A Pioneering Model That Generates Realistic Ophthalmic Surgical Videos Following Natural Language Instructions, Built on 160K+ Video-Instruction Pairs

In ophthalmic surgery, developing AI systems that can interpret surgical videos and predict subsequent operations requires a large number of ophthalmic surgical videos with high-quality annotations. Such data are difficult to collect because of privacy concerns and the labor that annotation demands. Text-guided video generation (T2V) is a promising way around this problem: it generates ophthalmic surgical videos from surgeon instructions.

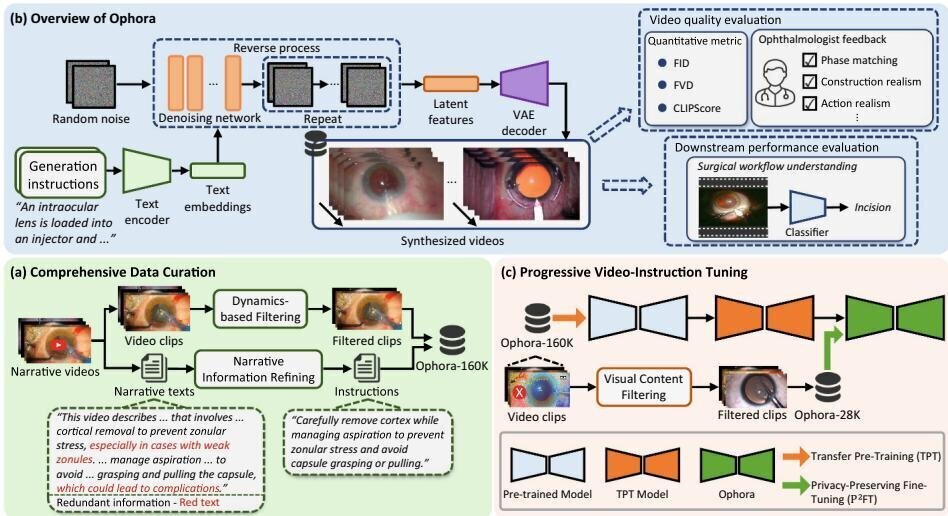

Ophora is a pioneering model that generates ophthalmic surgical videos from natural language instructions. It first applies a Comprehensive Data Curation pipeline that converts narrative ophthalmic surgical videos into a large-scale, high-quality dataset of over 160K video-instruction pairs (Ophora-160K). A Progressive Video-Instruction Tuning scheme then transfers rich spatial-temporal knowledge from a T2V model pre-trained on natural video-text datasets to privacy-preserved ophthalmic surgical video generation. Experiments show that Ophora generates realistic and reliable ophthalmic surgical videos, as validated by both quantitative analysis and ophthalmologist feedback.

Core Highlights

01 — Ophora-160K: Large-Scale Video-Instruction Dataset

Ophora-160K contains 162,185 video clip-instruction pairs extracted from 9,819 narrative videos of ophthalmic surgery, with an average clip duration of 5.54 seconds. The dataset is constructed through a Comprehensive Data Curation pipeline with two main steps. Narrative Information Refining uses Qwen2.5-72B to remove irrelevant information from captions and transform them into generation instructions. Dynamics-Based Filtering uses PySceneDetect to filter out clips with extreme temporal dynamics. Clips with a resolution below 720×480 are also removed to ensure quality.
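The filtering steps described above can be pictured with a minimal sketch. It is not the authors' code: the `Clip` record, the `dynamics` score, and the `MIN_DYNAMICS`/`MAX_DYNAMICS` bounds are hypothetical, and reading "below 720×480" as "width under 720 or height under 480" is an assumption. In practice the dynamics score would come from a tool such as PySceneDetect; here it is simply taken as given.

```python
from dataclasses import dataclass

# Minimum resolution stated in the text. Treating "below 720x480" as
# "width < 720 or height < 480" is an assumption of this sketch.
MIN_WIDTH, MIN_HEIGHT = 720, 480

# Hypothetical bounds on a per-clip dynamics score (not from the source).
MIN_DYNAMICS, MAX_DYNAMICS = 0.05, 0.95


@dataclass
class Clip:
    path: str
    width: int
    height: int
    dynamics: float  # hypothetical score in [0, 1], computed elsewhere


def keep_clip(clip: Clip) -> bool:
    """Return True if the clip passes the resolution and dynamics checks."""
    if clip.width < MIN_WIDTH or clip.height < MIN_HEIGHT:
        return False  # low-resolution clip
    # Reject clips that are nearly static or change too abruptly.
    return MIN_DYNAMICS <= clip.dynamics <= MAX_DYNAMICS


def curate(clips: list[Clip]) -> list[Clip]:
    """Keep only the clips that pass both filters, preserving order."""
    return [clip for clip in clips if keep_clip(clip)]
```

Keeping the two checks in one predicate makes it easy to log why a clip was dropped, or to tune the hypothetical bounds against a held-out sample of clips.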

02 — Progressive Video-Instruction Tuning with Privacy Preservation

Built on CogVideoX-2b as the backbone, Ophora employs a two-stage training approach. The first stage, Transfer Pre-training, uses the entire Ophora-160K dataset for continual pre-training of the denoising network while keeping the T5 encoder and VAE frozen; timestep sub-interval sampling across GPUs improves training efficiency. The second stage, Privacy-Preserving Fine-Tuning, uses Qwen2.5-VL-72B to detect and filter out videos containing sensitive information (subtitles, watermarks). The result is Ophora-28K, a privacy-preserved subset of over 28K video-instruction pairs. Fine-tuning on this subset enhances privacy while avoiding overwriting previously learned spatial-temporal knowledge.
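One plausible reading of timestep sub-interval sampling across GPUs is that the diffusion timestep range is split into equal, contiguous slices, one per GPU rank, and each rank draws its timesteps only from its own slice. The sketch below illustrates that reading under stated assumptions: the function names, the default of 1000 timesteps, and the equal-width split are all hypothetical and are not taken from the Ophora code.

```python
import random


def subinterval_bounds(rank: int, world_size: int, num_timesteps: int = 1000):
    """Return the half-open timestep range [lo, hi) assigned to one rank."""
    if not 0 <= rank < world_size:
        raise ValueError("rank must satisfy 0 <= rank < world_size")
    if world_size > num_timesteps:
        raise ValueError("more ranks than timesteps; some slices would be empty")
    lo = rank * num_timesteps // world_size
    hi = (rank + 1) * num_timesteps // world_size
    return lo, hi


def sample_timesteps(rank, world_size, batch_size, num_timesteps=1000, rng=None):
    """Draw batch_size timesteps uniformly from this rank's sub-interval."""
    rng = rng or random
    lo, hi = subinterval_bounds(rank, world_size, num_timesteps)
    return [rng.randrange(lo, hi) for _ in range(batch_size)]


# Example: with 4 ranks, rank 2 only ever sees timesteps in [500, 750).
print(subinterval_bounds(2, 4))
```

Taken together, the ranks cover the full timestep range at every step, which spreads samples more evenly than letting each rank draw independently from the whole range.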

03 — Superior Generation Quality and Downstream Impact

Ophora outperforms the state-of-the-art surgical video generation models Endora and Bora on all metrics, with the lowest FID and FVD scores and the highest CLIPScore (39.19), the latter indicating superior video-text consistency. Ophthalmologist evaluation of 600 generated videos against seven criteria confirms realistic surgical scenes with proper instruments and coherent actions. As a data augmentation tool, Ophora-synthesized videos improve downstream ophthalmic surgical workflow classification on OphNet; MViTv2 shows the largest gain, with phase-level Top-1 accuracy on the test set rising from 37.92% to 42.24%.
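To put the MViTv2 result in perspective, the two reported accuracies can be turned into an absolute and a relative gain. The snippet below is plain arithmetic on the figures quoted above; the variable names are illustrative only.

```python
baseline_top1 = 37.92   # phase-level Top-1 accuracy (%) before augmentation
augmented_top1 = 42.24  # phase-level Top-1 accuracy (%) with synthesized videos

absolute_gain = augmented_top1 - baseline_top1      # in percentage points
relative_gain = absolute_gain / baseline_top1 * 100  # in percent of the baseline

print(f"{absolute_gain:.2f} percentage points")  # 4.32 percentage points
print(f"{relative_gain:.1f}% relative")          # 11.4% relative
```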

Ophora establishes a pioneering approach for text-guided ophthalmic surgical video generation, demonstrating significant potential for developing general surgical AI systems. By combining a comprehensive data curation pipeline with progressive video-instruction tuning, Ophora generates realistic and reliable ophthalmic videos based on surgeon instructions while preserving patient privacy. The generated videos serve as effective augmented data for improving downstream ophthalmic surgical workflow understanding, addressing the critical shortage of annotated surgical video data in ophthalmology.

Key Contributions

- Proposed a Comprehensive Data Curation pipeline to convert narrative ophthalmic surgical videos into Ophora-160K, a large-scale, high-quality dataset comprising 162,185 video-instruction pairs from 9,819 source videos.

- Introduced Progressive Video-Instruction Tuning, a two-stage approach (transfer pre-training + privacy-preserving fine-tuning) that transfers spatial-temporal knowledge from a T2V model pre-trained on natural videos for privacy-preserved ophthalmic surgical video generation.

- Demonstrated state-of-the-art video generation quality with the best FID, FVD, and CLIPScore across all evaluated models, validated by both quantitative analysis and ophthalmologist feedback across seven realism criteria.

- Validated the downstream impact of synthesized videos on ophthalmic surgical workflow understanding, achieving the highest performance boost on OphNet phase and operation classification tasks.

Authors

Wei Li, Ming Hu, Guoan Wang, Lihao Liu, Kaijing Zhou, Junzhi Ning, Xin Guo, Zongyuan Ge, Lixu Gu, Junjun He