A Survey of Scientific Large Language Models: From Data Foundations to Agent Frontiers

A comprehensive, data-centric synthesis reviewing 270+ pre-/post-training datasets and 190+ benchmarks across all major scientific disciplines

Scientific Large Language Models (Sci-LLMs) are transforming how knowledge is represented, integrated, and applied in scientific research, yet their progress is shaped by the complex nature of scientific data. This survey presents a comprehensive, data-centric synthesis that reframes the development of Sci-LLMs as a co-evolution between models and their underlying data substrate. It formulates a unified taxonomy of scientific data and a hierarchical model of scientific knowledge, emphasizing the multimodal, cross-scale, and domain-specific challenges that differentiate scientific corpora from general natural language processing datasets.

The survey systematically reviews recent Sci-LLMs, from general-purpose foundations to specialized models across diverse scientific disciplines, alongside an extensive analysis of over 270 pre-/post-training datasets and over 190 benchmark datasets. It shows why Sci-LLMs face distinct demands: their corpora are heterogeneous, multi-scale, and uncertainty-laden, and they require representations that preserve domain invariance and enable cross-modal reasoning.

On evaluation, the survey traces a shift from static exams toward process- and discovery-oriented assessments with advanced evaluation protocols. These data-centric analyses highlight persistent issues in scientific data development and discuss emerging solutions involving semi-automated annotation pipelines and expert validation. Finally, the work outlines a paradigm shift toward closed-loop systems where autonomous agents based on Sci-LLMs actively experiment, validate, and contribute to a living, evolving knowledge base.

Core Highlights

01 — Unified Data Taxonomy and Knowledge Hierarchy

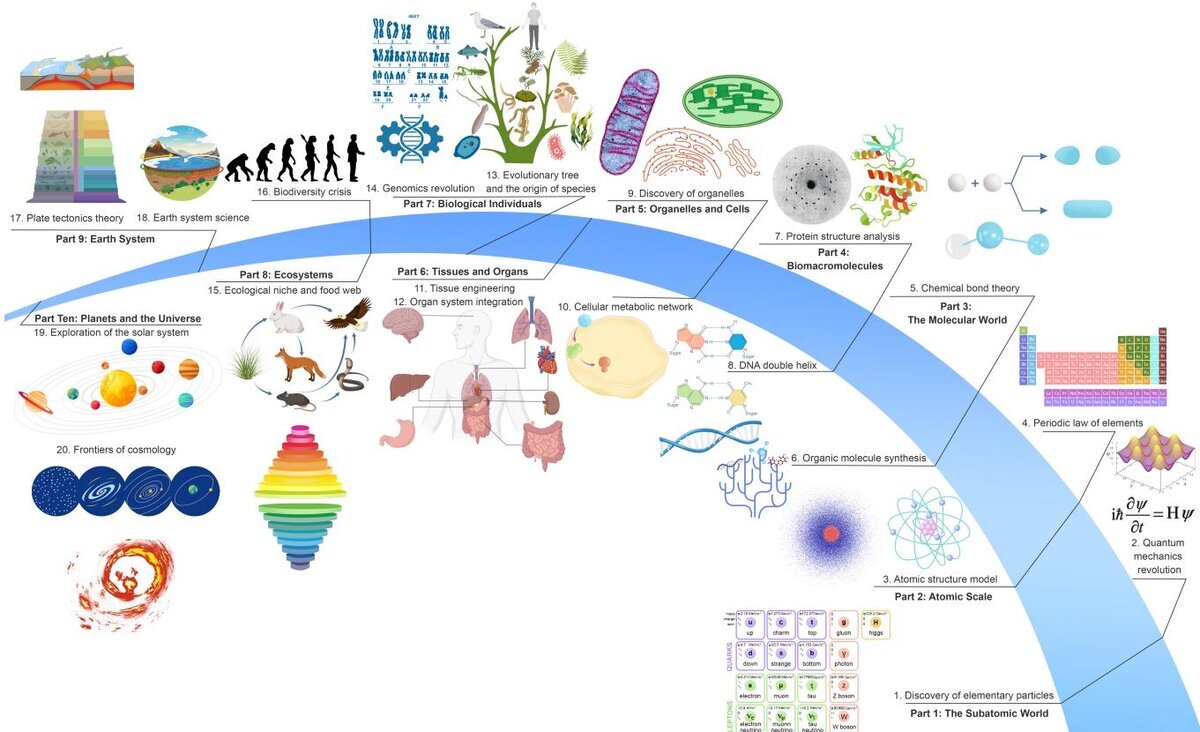

The survey formulates a unified taxonomy of scientific data spanning six major categories: textual formats, visual data, symbolic representations, structured data, time-series data, and multi-omics integration. It is complemented by a hierarchical structure of scientific knowledge organized into five levels (factual, theoretical, methodological and technological, modeling and simulation, and insight), with dynamic interactions and evolution between them. Together, these frameworks provide a principled lens for understanding why scientific corpora demand fundamentally different treatment from general NLP datasets.
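To make the taxonomy concrete, here is a minimal Python sketch of how a dataset catalogue might tag each entry with the six data categories and five knowledge levels listed above. The class names, the `DatasetRecord` fields, the helper functions, and the example entries are all hypothetical illustrations, not the survey's own schema. Numbering the knowledge levels as an ordered scale is an assumption based only on the order in which they are listed, and the sketch does not model the dynamic interactions between levels.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from enum import Enum, IntEnum


class DataModality(Enum):
    """The six data categories named in the survey's taxonomy."""
    TEXTUAL = "textual formats"
    VISUAL = "visual data"
    SYMBOLIC = "symbolic representations"
    STRUCTURED = "structured data"
    TIME_SERIES = "time-series data"
    MULTI_OMICS = "multi-omics integration"


class KnowledgeLevel(IntEnum):
    """The five knowledge levels, numbered in the order the survey lists them.
    Treating them as an ordered integer scale is an assumption of this sketch."""
    FACTUAL = 1
    THEORETICAL = 2
    METHODOLOGICAL_TECHNOLOGICAL = 3
    MODELING_SIMULATION = 4
    INSIGHT = 5


@dataclass
class DatasetRecord:
    """Hypothetical catalogue entry; field names are illustrative only."""
    name: str
    discipline: str
    modalities: set[DataModality] = field(default_factory=set)
    levels: set[KnowledgeLevel] = field(default_factory=set)

    def is_multimodal(self) -> bool:
        # An entry spanning more than one data category calls for cross-modal handling.
        return len(self.modalities) > 1

    def highest_level(self) -> KnowledgeLevel | None:
        # Highest knowledge level covered, under the ordering assumption above.
        return max(self.levels) if self.levels else None


def count_by_modality(records: list[DatasetRecord]) -> dict[DataModality, int]:
    """Count how many catalogued entries touch each data category."""
    counts = {m: 0 for m in DataModality}
    for record in records:
        for modality in record.modalities:
            counts[modality] += 1
    return counts


# Hypothetical example entries (not real datasets).
records = [
    DatasetRecord("example-spectra-corpus", "chemistry",
                  {DataModality.TEXTUAL, DataModality.TIME_SERIES},
                  {KnowledgeLevel.FACTUAL}),
    DatasetRecord("example-omics-qa", "life sciences",
                  {DataModality.MULTI_OMICS},
                  {KnowledgeLevel.FACTUAL, KnowledgeLevel.INSIGHT}),
]
counts = count_by_modality(records)
```

Using enums rather than free-text labels keeps the category set closed, so a per-category count such as `count_by_modality` always reports all six categories, including those with no entries.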

02 — Comprehensive Model and Dataset Analysis Across Disciplines

The work provides the most extensive survey to date of Sci-LLMs across physics, chemistry, materials science, life sciences, astronomy, and earth science. It systematically catalogues over 270 pre-/post-training datasets and reviews both general-purpose Sci-LLMs and domain-specific models. The analysis shows that scientific corpora are heterogeneous, multi-scale, and uncertainty-laden, so Sci-LLMs require representations that preserve domain invariance and enable cross-modal reasoning across diverse scientific modalities.

03 — From Static Benchmarks to Agent-Driven Scientific Discovery

Examining over 190 evaluation benchmarks, the survey traces a paradigm shift from static exam-style assessments toward process- and discovery-oriented evaluations with advanced protocols, including LLM/Agent-as-a-Judge and test-time learning approaches. Crucially, the work outlines a new paradigm of closed-loop systems in which autonomous scientific agents based on Sci-LLMs actively experiment, validate, and contribute to living knowledge bases. The outlined directions span multi-agent collaboration, tool use, self-evolving agents, and autonomous scientific discovery.

This survey provides a roadmap for building trustworthy, continually evolving artificial intelligence systems that function as true partners in accelerating scientific discovery. By reframing Sci-LLM development as a co-evolution between models and their data substrate, the work highlights persistent issues in scientific data development, including data traceability crises, scientific data latency, and a lack of AI-readiness, while pointing toward emerging solutions such as semi-automated annotation pipelines, expert validation, and operating-system-level interaction protocols for scientific data ecosystems.

Key Contributions

- Presented a data-centric synthesis that reframes Sci-LLM development as a co-evolution between models and their underlying data substrate, with a unified taxonomy of scientific data and a hierarchical model of scientific knowledge.

- Systematically reviewed Sci-LLMs across six major scientific disciplines (physics, chemistry, materials science, life sciences, astronomy, earth science), cataloguing 270+ pre-/post-training datasets and analyzing their distinctive demands.

- Examined 190+ evaluation benchmarks and traced the shift from static exams toward process- and discovery-oriented assessments, including LLM/Agent-as-a-Judge evaluation protocols.

- Outlined a paradigm shift toward closed-loop scientific agents that actively experiment, validate, and contribute to living knowledge bases, providing a comprehensive roadmap for trustworthy AI-driven scientific discovery.

Authors

Ming Hu, Chenglong Ma, Wei Li, Wanghan Xu, Jiamin Wu, Jucheng Hu, Tianbin Li, Guohang Zhuang, Jiaqi Liu, Yingzhou Lu, Ying Chen, Chaoyang Zhang, Cheng Tan, Jie Ying, Guocheng Wu, et al.

In collaboration with 80+ researchers from 20+ global institutions including Shanghai AI Laboratory, Monash University, Fudan University, Shanghai Jiao Tong University, CUHK, UCL, Stanford, Virginia Tech, Johns Hopkins, University of Cambridge, HKU, Caltech, and others.

Corresponding authors: Zongyuan Ge, Shixiang Tang, Junjun He, Chunfeng Song, Lei Bai, Bowen Zhou.