UniMedVL: Unifying Medical Multimodal Understanding and Generation

The First Unified Medical Model That Couples Image Understanding and Generation within a Single Architecture via Observation-Knowledge-Analysis

Medical diagnosis fundamentally requires models that can process multimodal medical inputs — images, patient histories, symptom descriptions — and produce diverse outputs including textual reports and visual content such as annotations or segmentation masks. However, existing medical AI models fragment this unified process: image understanding models interpret images without producing visual outputs, while image generation models produce visual outputs but cannot provide textual explanations.

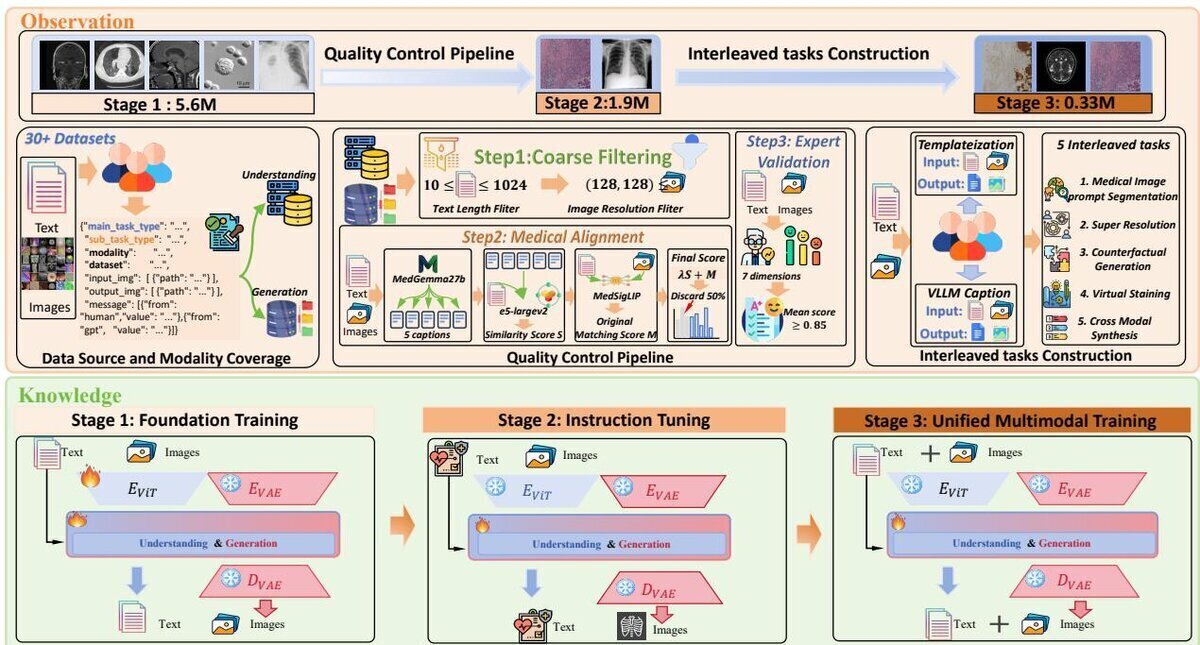

UniMedVL addresses this gap through a multi-level framework called Observation-Knowledge-Analysis (OKA). At the observation level, we construct UniMed-5M, a dataset of over 5.6 million samples that reformats diverse unimodal data into multimodal pairs across 8 imaging modalities. At the knowledge level, we propose Progressive Curriculum Learning, in which the model learns medical multimodal understanding and generation knowledge simultaneously. At the analysis level, we introduce UniMedVL, the first unified medical model that handles both image understanding and generation within a single architecture, without manually reloading model checkpoints.

Core Highlights

01 — UniMed-5M: Large-Scale Multimodal Medical Dataset

UniMed-5M contains over 5.6 million multimodal medical samples spanning 8 primary imaging modalities, constructed through a rigorous quality control pipeline. Raw datasets undergo coarse filtering for resolution and text quality, followed by medical alignment scoring using MedGemma-27b and MedSigLIP to ensure clinical relevance. Expert validation by five medical professionals confirms data quality with strong inter-rater agreement (κ > 0.80). The dataset reformats diverse unimodal data into unified multimodal input-output pairs, including 5 interleaved tasks: medical image prompt segmentation, super-resolution, counterfactual generation, virtual immunohistochemistry staining, and cross-modal synthesis.
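The coarse-then-alignment filtering described above can be sketched as follows. This is a minimal illustration under stated assumptions: the `Sample` record, the threshold values (`MIN_SIDE_PX`, `MIN_TEXT_WORDS`, `MIN_ALIGNMENT`) and the rule of averaging alignment scores are all hypothetical, and the scorer callables are stand-ins for MedGemma-27b and MedSigLIP, whose actual prompts and scoring interfaces are not described here.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, List

# Hypothetical thresholds: the actual cut-offs used for UniMed-5M are not given.
MIN_SIDE_PX = 224       # coarse filter: minimum image height and width
MIN_TEXT_WORDS = 5      # coarse filter: minimum length of the paired text
MIN_ALIGNMENT = 0.5     # alignment filter: minimum mean alignment score


@dataclass
class Sample:
    width: int
    height: int
    text: str


def coarse_filter(s: Sample) -> bool:
    """Stage 1: cheap checks on image resolution and text quality."""
    big_enough = min(s.width, s.height) >= MIN_SIDE_PX
    has_text = len(s.text.split()) >= MIN_TEXT_WORDS
    return big_enough and has_text


def quality_control(
    samples: Iterable[Sample],
    alignment_scorers: List[Callable[[Sample], float]],
) -> List[Sample]:
    """Stage 2: keep coarse-filtered samples whose mean alignment score
    (from stand-ins for the MedGemma/MedSigLIP scorers) clears MIN_ALIGNMENT.
    Averaging the scorers is an assumption of this sketch."""
    if not alignment_scorers:
        raise ValueError("at least one alignment scorer is required")
    kept = []
    for s in samples:
        if not coarse_filter(s):
            continue  # rejected cheaply, no model call needed
        scores = [score(s) for score in alignment_scorers]
        if sum(scores) / len(scores) >= MIN_ALIGNMENT:
            kept.append(s)
    return kept


if __name__ == "__main__":
    # Toy scorer: treats texts mentioning a lesion as well aligned.
    toy = lambda s: 0.9 if "lesion" in s.text else 0.1
    data = [
        Sample(512, 512, "Axial CT showing a hypodense lesion in the liver"),
        Sample(128, 128, "Axial CT showing a hypodense lesion"),  # too small
        Sample(512, 512, "CT scan"),                               # text too short
    ]
    print(quality_control(data, [toy, toy]))
```

Running the cheap coarse checks first means the expensive model-based scorers are only called on samples that already meet the resolution and text requirements.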

02 — Progressive Curriculum Learning

UniMedVL is trained through a principled three-stage curriculum that progressively builds from basic medical pattern recognition to sophisticated multimodal capabilities. Stage 1 — Foundation Training establishes basic medical image understanding and generation capabilities on the entire UniMed-5M dataset. Stage 2 — Instruction Tuning improves instruction-following via Distilled Chain of Thought (DCOT) for understanding tasks and Caption Augmented Generation (CAG) for generation tasks. Stage 3 — Unified Multimodal Training fine-tunes on complex interleaved tasks that combine understanding and generation within unified sequences, enabling bidirectional knowledge sharing between the two pathways.
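The three stages can be expressed as a simple step-indexed schedule. This is a sketch under assumptions: the stage boundaries in `STAGE_ENDS` and the per-stage mixture weights are hypothetical placeholders, since the text gives each stage's content but not its length or sampling ratios, and the task labels are shorthand chosen for this illustration.

```python
from bisect import bisect_right
from typing import Dict, List, Tuple

# (stage name, task-mixture weights). Stage contents follow the text above;
# the weights are hypothetical placeholders.
STAGES: List[Tuple[str, Dict[str, float]]] = [
    # Stage 1: foundation training on the whole of UniMed-5M
    ("foundation", {"understanding": 0.5, "generation": 0.5}),
    # Stage 2: instruction tuning with DCOT (understanding) and CAG (generation)
    ("instruction_tuning", {"understanding_dcot": 0.5, "generation_cag": 0.5}),
    # Stage 3: interleaved sequences combining understanding and generation
    ("unified_multimodal", {"interleaved": 1.0}),
]

# Hypothetical exclusive end step of each stage.
STAGE_ENDS: List[int] = [10_000, 15_000, 20_000]


def stage_at(step: int) -> Tuple[str, Dict[str, float]]:
    """Return (name, mixture) for the stage active at a global training step."""
    if not 0 <= step < STAGE_ENDS[-1]:
        raise ValueError(f"step {step} is outside the schedule")
    # bisect_right counts the stage ends <= step, i.e. the stages already finished.
    return STAGES[bisect_right(STAGE_ENDS, step)]


if __name__ == "__main__":
    for step in (0, 12_000, 19_999):
        name, mixture = stage_at(step)
        print(step, name, mixture)
```

Keeping the schedule as data rather than as branching control flow makes the stage order explicit and easy to validate, for example by checking that each stage's weights sum to one.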

03 — State-of-the-Art Unified Performance

With 14B total parameters (7B activated during inference), UniMedVL achieves the best performance among unified models on 5 medical image understanding benchmarks, scoring 85.8% on OmniMedVQA (vs. 74.4% for HealthGPT-L14) and 60.75% on GMAI-MMBench. At the same time, it matches specialised models in generation quality across 8 medical imaging modalities, with an average gFID of 96.29 and a BioMedCLIP score of 0.706. Crucially, ablation studies confirm that joint training consistently outperforms single-task variants, showing that understanding and generation capabilities reinforce each other through the unified architecture.
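The figure of 14B total but 7B activated parameters means each token is processed by only half of the stored weights. The text does not say how this is achieved; the sketch below uses one common pattern, hard routing of each token to one of two equal-sized parameter sets by token type, purely to make the arithmetic concrete. The expert names, the routing rule, and the assumption of two equal 7B sets with no shared weights are hypothetical simplifications, not a description of UniMedVL's actual architecture.

```python
from typing import Dict, List

# Hypothetical: two equal-sized parameter sets ("experts") with no shared weights.
EXPERT_PARAMS: Dict[str, int] = {
    "understanding": 7_000_000_000,
    "generation": 7_000_000_000,
}


def route(token_kind: str) -> str:
    """Assumed hard-routing rule: image tokens being generated go to the
    generation expert; text and input-image tokens go to the understanding
    expert."""
    return "generation" if token_kind == "generated_image" else "understanding"


def active_params(token_kinds: List[str]) -> List[int]:
    """Number of parameters each token passes through under the rule above."""
    return [EXPERT_PARAMS[route(kind)] for kind in token_kinds]


# Parameters stored in the model, regardless of which are used per token.
total_params = sum(EXPERT_PARAMS.values())

if __name__ == "__main__":
    sequence = ["text", "input_image", "text", "generated_image"]
    print("total:", total_params)
    print("per token:", active_params(sequence))
```

Because every token goes through exactly one of the two sets, per-token compute scales with 7B parameters even though 14B are stored; in practice some components may be shared, so a real split would be less clean than this.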

UniMedVL establishes a new paradigm for unified medical AI by performing image understanding and generation within a single model. Extensive experiments on over 5 million medical samples show that the OKA framework, which combines large-scale multimodal data construction, progressive curriculum learning, and a unified architecture, enables bidirectional knowledge sharing that improves both comprehension and generation quality. This work is a critical step toward truly integrated medical AI systems in which understanding and generation capabilities reinforce each other to support clinical workflows.

Key Contributions

- Constructed UniMed-5M — a large-scale dataset with over 5.6M multimodal medical samples spanning 8 imaging modalities, reformatting diverse unimodal datasets into unified multimodal input-output pairs with rigorous quality control.

- Devised Progressive Curriculum Learning, a three-stage training paradigm (foundation training → instruction tuning → unified multimodal training) that systematically builds cross-modal understanding-generation capabilities with bidirectional knowledge transfer.

- Introduced UniMedVL, the first unified medical multimodal model that processes multimodal inputs and generates both textual and visual outputs within a single architecture, without requiring separate model checkpoints for different task types.

- Achieved state-of-the-art performance on medical VQA benchmarks among unified models while matching specialised generation models across 8 imaging modalities, demonstrating that joint training yields mutual enhancement rather than compromise.

Authors

Junzhi Ning*, Wei Li*, Cheng Tang*, Jiashi Lin, Chenglong Ma, Chaoyang Zhang, Jiyao Liu, Ying Chen, Shujian Gao, Lihao Liu, Yuandong Pu, Huihui Xu, Chenhui Gou, Ziyan Huang, Yi Xin, Qi Qin, Zhongying Deng, Diping Song, Bin Fu, Guang Yang, Yuanfeng Ji, Tianbin Li, Yanzhou Su, Jin Ye, Shixiang Tang, Ming Hu, Junjun He

* Equal contribution (co-first authors)